list_del_init removes the driver module from the kernel's module list, so it no longer shows up in lsmod.

kobject_del removes the module's own entry from sysfs (the module kobject lives under /sys/module/xxxxxx).

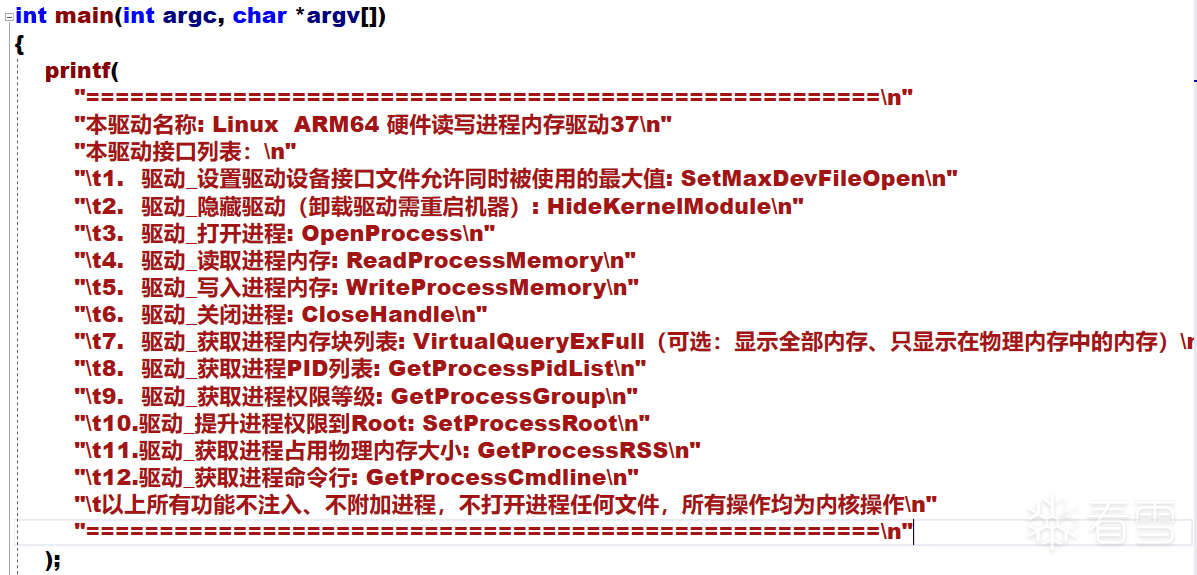

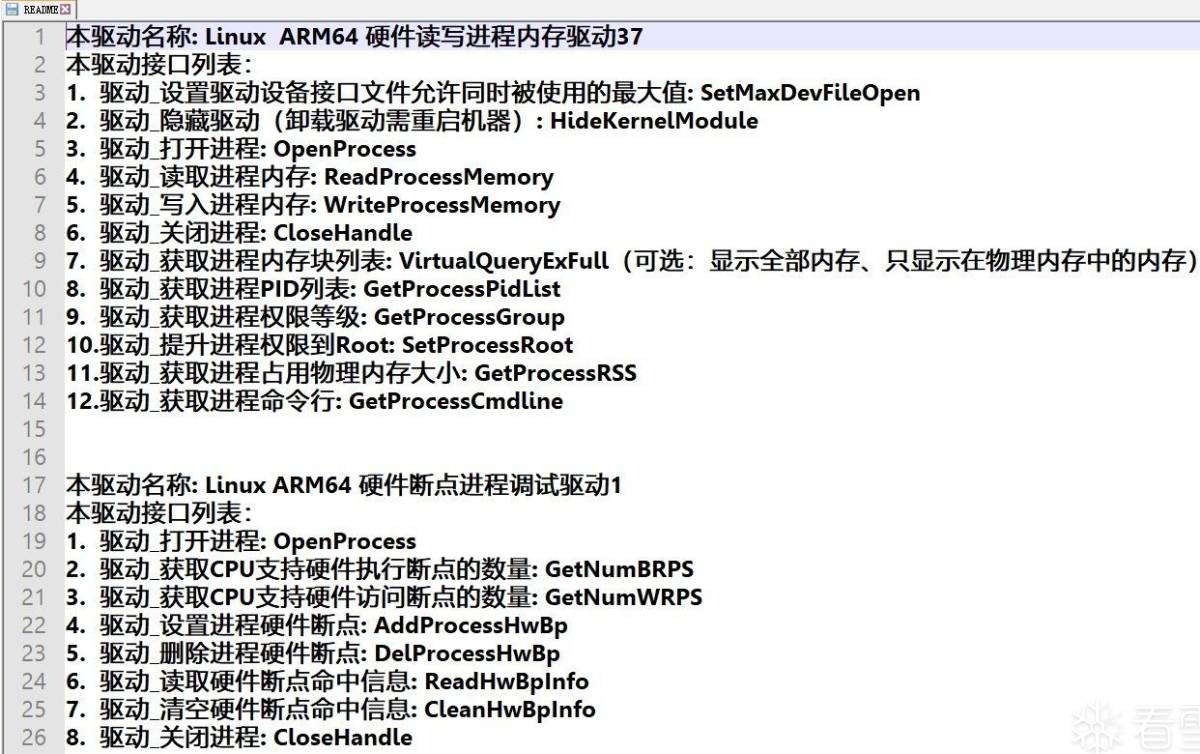

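The Linux kernel does not distinguish between processes and threads; everything is treated as a thread (a task). Each task therefore has a corresponding pid_t, struct pid, and task_struct.

They relate to each other as follows: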

pid_t <–> struct pid

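nr is the numeric PID of the process.

With the lookup helpers from kernel/pid.c (find_get_pid, get_pid_task, get_task_pid, pid_vnr) in view, things should look a lot clearer: the three representations are interconvertible. From a pid_t you can get a struct pid, from a struct pid you can get a task_struct, and from a task_struct you can get back to the PID.

The driver source uses put_pid to decrement the reference count of a struct pid * by one.

You can see this in kernel/pid.c in the Linux kernel source.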
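The get/put pairing can be illustrated with a small user-space model. This is only a sketch: `toy_pid`, `toy_alloc`, `toy_get`, and `toy_put` are hypothetical names, not kernel APIs, and the real struct pid uses the kernel's own reference-count type and allocator. It only shows why every find_get_pid needs a matching put_pid.

```c
#include <assert.h>
#include <stdatomic.h>
#include <stdlib.h>

/* Hypothetical stand-in for struct pid: a number plus a reference count. */
struct toy_pid {
    atomic_int count;
    int nr;
};

/* Allocate an object that starts with one reference, held by the creator. */
static struct toy_pid *toy_alloc(int nr)
{
    struct toy_pid *p = malloc(sizeof(*p));

    if (!p)
        return NULL;
    atomic_init(&p->count, 1);
    p->nr = nr;
    return p;
}

/* Take an extra reference, in the spirit of find_get_pid() -> get_pid(). */
static struct toy_pid *toy_get(struct toy_pid *p)
{
    if (p)
        atomic_fetch_add(&p->count, 1);
    return p;
}

/* Drop one reference; free on the last one. Returns 1 if the object was freed. */
static int toy_put(struct toy_pid *p)
{
    if (!p)
        return 0;
    assert(atomic_load(&p->count) > 0);
    if (atomic_fetch_sub(&p->count, 1) == 1) {
        free(p);
        return 1;
    }
    return 0;
}
```

A forgotten put leaves the count above zero forever, so the object is never freed; an extra put frees it while someone else may still be using it.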

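Memory is read and written through physical addresses here, because that approach is simple and direct and is not affected by other anti-debugging interference.

First, use get_pid_task on the struct pid * to obtain the task_struct, then use get_task_mm to obtain the mm_struct, which holds the process's memory information. Then check that the memory is readable: if (vma->vm_flags & VM_READ)

If it is readable, compute the physical address. Because the kernel runs with the MMU enabled, physical addresses can only be computed one page at a time. There are many ways to compute them; here are three approaches:

The first is to use get_user_pages,

Once the physical address is known, reading and writing it is straightforward. The kernel itself contains an example, drivers/char/mem.c, which is quite detailed. Finally, keep in mind that the MMU maps memory non-contiguously, so the buffer is not physically contiguous. Put simply, do not read so much in one go that you run into the next page; split the access at page boundaries.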
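The page-boundary rule is just arithmetic, so it can be sketched in plain user-space C. This is a minimal illustration, not the driver's code: `SKETCH_PAGE_SIZE`, `chunk_in_page`, and `count_chunks` are hypothetical names, and a 4 KiB page size is assumed.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

#define SKETCH_PAGE_SIZE 4096u  /* assumption: 4 KiB pages */

/* Bytes that can be copied starting at addr without leaving addr's page,
 * capped at the number of bytes still wanted. */
static size_t chunk_in_page(uint64_t addr, size_t remaining)
{
    size_t to_page_end =
        SKETCH_PAGE_SIZE - (size_t)(addr & (SKETCH_PAGE_SIZE - 1));

    return remaining < to_page_end ? remaining : to_page_end;
}

/* Count how many per-page pieces a request of len bytes at addr splits into.
 * A real copy loop would translate and copy one piece per iteration. */
static size_t count_chunks(uint64_t addr, size_t len)
{
    size_t chunks = 0;

    while (len > 0) {
        size_t n = chunk_in_page(addr, len);

        assert(n > 0 && n <= len);
        addr += n;
        len -= n;
        chunks++;
    }
    return chunks;
}
```

For example, a 100-byte request at 0x1ff0 splits into a 16-byte piece that ends the first page and an 84-byte piece at the start of the next one.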

This interface is simple: get the mm_struct from the task_struct, then walk the VMAs stored in the mm_struct. For details, see fs/proc/task_mmu.c.
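fs/proc/task_mmu.c is also the code that produces /proc/<pid>/maps, so the same VMA information can be inspected from user space. The sketch below parses one line of that file; `map_entry`, `parse_maps_line`, and `is_readable` are hypothetical helper names, and paths containing spaces are cut at the first space for brevity.

```c
#include <assert.h>
#include <stdio.h>
#include <string.h>

/* One mapping as shown in /proc/<pid>/maps. */
struct map_entry {
    unsigned long start, end;
    char perms[5];   /* e.g. "r-xp" */
    char path[256];  /* empty for anonymous mappings */
};

/* Parse "start-end perms offset major:minor inode [path]".
 * Returns 0 on success, -1 if the line does not match. */
static int parse_maps_line(const char *line, struct map_entry *out)
{
    unsigned long offset, inode;
    unsigned int dev_major, dev_minor;
    int n;

    assert(line != NULL && out != NULL);
    out->path[0] = '\0';
    n = sscanf(line, "%lx-%lx %4s %lx %x:%x %lu %255s",
               &out->start, &out->end, out->perms,
               &offset, &dev_major, &dev_minor, &inode, out->path);
    return n >= 7 ? 0 : -1;
}

/* User-space counterpart of the (vma->vm_flags & VM_READ) check. */
static int is_readable(const struct map_entry *e)
{
    return e->perms[0] == 'r';
}
```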

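The mm_struct structure has a field named arg_start; the address stored there is where the process command line begins.

There is one caveat. Testing on several devices showed that the offset of arg_start is not the same on every Linux kernel build. That is dangerous: reading the wrong offset crashes the machine, and the cause is hard to track down.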
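The bytes between arg_start and arg_end are what /proc/<pid>/cmdline exposes: the arguments back to back, each terminated by a NUL byte. As a sketch of how such a buffer is split, assuming it has already been read into memory (`split_cmdline` is a hypothetical helper):

```c
#include <assert.h>
#include <stddef.h>
#include <string.h>

/* Split a buffer of NUL-terminated strings into argv-style pointers.
 * Stores at most max_args pointers into argv and returns how many were
 * stored. A trailing piece without a terminating NUL is ignored. */
static size_t split_cmdline(const char *buf, size_t len,
                            const char **argv, size_t max_args)
{
    size_t argc = 0, i = 0;

    assert(buf != NULL && argv != NULL);
    while (i < len && argc < max_args) {
        size_t j = i;

        while (j < len && buf[j] != '\0')
            j++;
        if (j == len)
            break;              /* unterminated tail */
        argv[argc++] = buf + i;
        i = j + 1;              /* skip the NUL */
    }
    return argc;
}
```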

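The driver implements two methods: the first walks the /proc/pid directories, and the second walks the task_struct list. There is a caveat here as well. Testing on several devices showed that the offset of the tasks field inside task_struct is not the same on every Linux kernel build. I have not yet had time to write the heuristic correction for it and will add it when I can.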
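The first method has a direct user-space analogue: every process appears as a directory under /proc whose name is its decimal PID. A minimal sketch follows, with hypothetical helpers `parse_pid_name` and `count_pids`; the upper bound of 4194304 is an assumed sanity limit, not something taken from the driver.

```c
#include <assert.h>
#include <ctype.h>
#include <dirent.h>
#include <stddef.h>

/* Return the PID encoded in a /proc entry name, or -1 if the name is not
 * a positive decimal number within the assumed limit. */
static int parse_pid_name(const char *name)
{
    long pid = 0;
    const char *p;

    assert(name != NULL);
    if (*name == '\0')
        return -1;
    for (p = name; *p != '\0'; p++) {
        if (!isdigit((unsigned char)*p))
            return -1;
        pid = pid * 10 + (*p - '0');
        if (pid > 4194304)      /* assumed upper bound for a PID */
            return -1;
    }
    return pid > 0 ? (int)pid : -1;
}

/* Count the numeric entries under proc_root (normally "/proc").
 * Returns -1 if the directory cannot be opened. */
static int count_pids(const char *proc_root)
{
    DIR *dir = opendir(proc_root);
    struct dirent *ent;
    int count = 0;

    if (dir == NULL)
        return -1;
    while ((ent = readdir(dir)) != NULL) {
        if (parse_pid_name(ent->d_name) > 0)
            count++;
    }
    closedir(dir);
    return count;
}
```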

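Read the mm_struct from the task_struct, then read rss_stat to get the amount of physical memory the process occupies. This follows the code behind /proc/pid/status. The field offsets need the same heuristic correction here, and that method is already implemented in the driver.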
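The rss_stat counters are kept in pages, while /proc/<pid>/status reports VmRSS in kB, so a result can be cross-checked from user space. The sketch below converts a page count to kB and pulls the VmRSS value out of status text that has already been read; `pages_to_kb` and `parse_vmrss_kb` are hypothetical helpers, and the page size is passed in rather than assumed.

```c
#include <assert.h>
#include <stdio.h>
#include <string.h>

/* Convert a page count to kB; page_size is in bytes (e.g. 4096). */
static unsigned long pages_to_kb(unsigned long pages, unsigned long page_size)
{
    assert(page_size >= 1024 && page_size % 1024 == 0);
    return pages * (page_size / 1024);
}

/* Extract the VmRSS value (in kB) from the text of /proc/<pid>/status.
 * Returns -1 if the field is missing or malformed. */
static long parse_vmrss_kb(const char *status_text)
{
    const char *p = strstr(status_text, "VmRSS:");
    long kb;

    if (p == NULL)
        return -1;
    if (sscanf(p, "VmRSS: %ld kB", &kb) != 1)
        return -1;
    return kb;
}
```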

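Once you have the task_struct, the header file shows two fields in it: real_cred and cred. The idea is simple: set uid, gid, euid, egid, fsuid, and fsgid to 0 in both cred structures.

real_cred points to the objective and real subjective credentials; cred points to the effective (overridable) subjective credentials. Normally cred and real_cred point to the same credentials, but cred can be temporarily overridden.

Note again that the offsets of the cred fields are not the same on every Linux kernel, and reading the wrong one crashes the machine. The same heuristic is used here: the driver corrects the field offsets automatically and identifies the correct offsets of real_cred and cred on each kernel version.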

GitHub link

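First, compiling this source takes some know-how. Second, phone vendors have put several obstacles in place to stop third-party drivers from loading, so to load this driver you need to remove some of those restrictions from the kernel. (In fact, all of these checks can be patched out by brute force with IDA.)

This source does not target any particular program. It is provided only for discussion, study, and debugging of Linux kernel programs, and any illegal use is prohibited. A debugger is a double-edged sword, and I hope everyone helps keep a healthy environment for discussing Linux kernel rootkit techniques.

To be open-sourced next:

“No source needed: force-patch a phone's Linux kernel to remove all checks on loading kernel drivers”

“No source needed: force-load .ko driver files, across Linux kernel versions and across devices”

“No source needed: a universal Linux kernel process debugger that gets past all anti-debugging mechanisms, hardware breakpoints included. Linux天下调: no Linux process should be hard to debug!”

“No source needed: break through the kernel's ELF structure restrictions and add OLLVM obfuscation to .ko driver files to make the driver harder to reverse”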

list_del_init(&__this_module.list);

kobject_del(&THIS_MODULE->mkobj.kobj);

pid_t pid_vnr(struct pid *pid)
{
    return pid_nr_ns(pid, current->nsproxy->pid_ns);
}
EXPORT_SYMBOL_GPL(pid_vnr);

struct pid *find_pid_ns(int nr, struct pid_namespace *ns);
EXPORT_SYMBOL_GPL(find_pid_ns);

struct pid *find_vpid(int nr)
{
    return find_pid_ns(nr, current->nsproxy->pid_ns);
}
EXPORT_SYMBOL_GPL(find_vpid);

/* Looks up the struct pid and takes a reference; release it with put_pid(). */
struct pid *find_get_pid(int nr)
{
    struct pid *pid;

    rcu_read_lock();
    pid = get_pid(find_vpid(nr));
    rcu_read_unlock();
    return pid;
}
EXPORT_SYMBOL_GPL(find_get_pid);

void put_pid(struct pid *pid);
EXPORT_SYMBOL_GPL(put_pid);

struct pid * –> struct task_struct *

struct pid *get_task_pid(struct task_struct *task, enum pid_type type);
EXPORT_SYMBOL_GPL(get_task_pid);

struct task_struct *pid_task(struct pid *pid, enum pid_type type);
EXPORT_SYMBOL(pid_task);

/* Returns the task with a reference held; release it with put_task_struct(). */
struct task_struct *get_pid_task(struct pid *pid, enum pid_type type)
{
    struct task_struct *result;

    rcu_read_lock();
    result = pid_task(pid, type);
    if (result)
        get_task_struct(result);
    rcu_read_unlock();
    return result;
}
EXPORT_SYMBOL(get_pid_task);

static inline void put_task_struct(struct task_struct *t)
{
    if (atomic_dec_and_test(&t->usage))
        __put_task_struct(t);
}

void __put_task_struct(struct task_struct *t);
EXPORT_SYMBOL_GPL(__put_task_struct);

Last edited 2021-4-3 01:42 by abcz316. Reason: added images.