[Share] Learning VT-x (SimpleVisor)


Posted: 2026-3-4 18:47 1633


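Preface

The previous blog post introduced several VT-x frameworks. I eventually chose SimpleVisor as the starting point. The goal this time is to walk through the whole VT-x flow with a minimal version, without the MSR bitmap or EPT (these will be added later).

Prerequisites

KeGenericCallDpc: runs a DPC callback on all CPUs at the same time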

/// <summary>

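/// Undocumented function (exported by ntoskrnl, but not declared in the WDK headers); makes every CPU execute Routine

/// </summary>

/// <param name="Routine">Callback routine</param>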

/// <param name="Context"></param>

/// <returns></returns>

NTKERNELAPI

_IRQL_requires_max_(APC_LEVEL)

_IRQL_requires_min_(PASSIVE_LEVEL)

_IRQL_requires_same_

VOID

KeGenericCallDpc (

_In_ PKDEFERRED_ROUTINE Routine,

_In_opt_ PVOID Context

);

KeSignalCallDpcDone: tells the system that the DPC work on the current CPU is finished

// Signal completion

NTKERNELAPI

_IRQL_requires_(DISPATCH_LEVEL)

_IRQL_requires_same_

VOID

KeSignalCallDpcDone (

_In_ PVOID SystemArgument1

);

KeSignalCallDpcSynchronize: acts as a synchronization barrier while the DPC runs on multiple cores

// Synchronize

NTKERNELAPI

_IRQL_requires_(DISPATCH_LEVEL)

_IRQL_requires_same_

LOGICAL

KeSignalCallDpcSynchronize (

_In_ PVOID SystemArgument2

);

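Step 1: Check whether the CPU supports VT

The detection method is fixed:

- Check that bit 5 (VMX) of ECX returned by cpuid leaf 1 is 1

- Check that bit 0 (lock) and bit 2 (enable VMXON outside SMX) of the IA32_FEATURE_CONTROL_MSR register are both 1

- Check whether CR4 bit 13 (VMXE) is 1, which indicates that VMX has already been enabled on this processor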
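The bit tests in the list above can be sketched in user mode as follows. This is only a minimal sketch: the helper names `sim_vmx_probe_bits` and `sim_cr4_vmxe_already_set` and the macro names are hypothetical and not part of SimpleVisor, and the register values are passed in as plain integers. In the driver they come from `__cpuid`, `__readmsr` and `__readcr4`.

```c
#include <stdbool.h>
#include <stdint.h>

#define SIM_CPUID_1_ECX_VMX       (1u << 5)    /* CPUID.1:ECX bit 5 */
#define SIM_FEATURE_CONTROL_LOCK  (1ull << 0)  /* IA32_FEATURE_CONTROL bit 0 */
#define SIM_FEATURE_CONTROL_VMXON (1ull << 2)  /* bit 2: VMXON outside SMX */
#define SIM_CR4_VMXE              (1ull << 13) /* CR4 bit 13 */

/* Hypothetical helper: true when both CPU and firmware allow VMXON. */
static bool sim_vmx_probe_bits(uint32_t cpuid1_ecx, uint64_t feature_control)
{
    if ((cpuid1_ecx & SIM_CPUID_1_ECX_VMX) == 0)
        return false;   /* the CPU does not report VMX */
    if ((feature_control & SIM_FEATURE_CONTROL_LOCK) == 0)
        return false;   /* firmware left the MSR unlocked */
    if ((feature_control & SIM_FEATURE_CONTROL_VMXON) == 0)
        return false;   /* firmware disabled VMXON outside SMX */
    return true;
}

/* Hypothetical helper: true when CR4.VMXE is already set. */
static bool sim_cr4_vmxe_already_set(uint64_t cr4)
{
    return (cr4 & SIM_CR4_VMXE) != 0;
}
```

For example, `sim_vmx_probe_bits(0x20, 0x5)` is true, while clearing either MSR bit or the CPUID bit makes it false.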

UINT8

ShvVmxProbe(

VOID

)

{

INT32 cpu_info[4];

UINT64 featureControl;

// Check the VMX feature bit (not the Hypervisor Present bit)

// cpu_info holds eax, ebx, ecx, edx

__cpuid(cpu_info, 1);

// Is bit 5 of ecx (VMX) set?

ULONG cpuidState = 1;

if ((cpu_info[2] & 0x20) == 0)

{

cpuidState = 0;

}

// Check if the Feature Control MSR is locked. If it isn't, this means that

// BIOS/UEFI firmware screwed up, and we could go around locking it, but

// we'd rather not mess with it.

featureControl = __readmsr(IA32_FEATURE_CONTROL_MSR);

// Is bit 0 (the lock bit) set?

ULONG msrState1 = 1;

if (!(featureControl & IA32_FEATURE_CONTROL_MSR_LOCK))

{

msrState1 = 0;

}

// The Feature Control MSR is locked-in (valid). Is VMX enabled in normal

// operation mode?

// Is bit 2 set?

ULONG msrState2 = 1;

if (!(featureControl & IA32_FEATURE_CONTROL_MSR_ENABLE_VMXON_OUTSIDE_SMX))

{

msrState2 = 0;

}

// CR4.VMXE (bit 13) already being set means VMX was enabled before we ran.

// This state is recorded for debugging only and does not affect the result.

ULONG64 cr4 = __readcr4();

ULONG cr4State = 1;

if ((cr4 >> 13) & 1)

{

cr4State = 0;

}

UNREFERENCED_PARAMETER(cr4State);

// Succeed only if both the hardware and the firmware allow us to enter

// VMX mode.

return (UINT8)(cpuidState && msrState1 && msrState2);

}

Step 2: Allocate space for the VMXON region, the VMCS, and the other required structures

PSHV_VP_DATA

ShvVpAllocateData(

_In_ UINT32 CpuCount

)

{

PSHV_VP_DATA data;

// Allocate a contiguous chunk of RAM to back this allocation

data = ShvOsAllocateContigousAlignedMemory(sizeof(*data) * CpuCount);

if (data != NULL)

{

// Zero out the entire data region

__stosq((UINT64*)data, 0, (sizeof(*data) / sizeof(UINT64)) * CpuCount);

}

// Return what is hopefully a valid pointer, otherwise NULL.

return data;

}

The SHV_VP_DATA structure that PSHV_VP_DATA points to contains the required VmxOn region, Vmcs, MsrBitmap, and so on

typedef struct _SHV_VP_DATA

{

union

{

DECLSPEC_ALIGN(PAGE_SIZE) UINT8 ShvStackLimit[KERNEL_STACK_SIZE];

struct

{

SHV_SPECIAL_REGISTERS SpecialRegisters;

CONTEXT ContextFrame;

UINT64 SystemDirectoryTableBase;

LARGE_INTEGER MsrData[17];

SHV_MTRR_RANGE MtrrData[16];

UINT64 VmxOnPhysicalAddress;

UINT64 VmcsPhysicalAddress;

UINT64 MsrBitmapPhysicalAddress;

UINT64 EptPml4PhysicalAddress;

UINT32 EptControls;

};

};

DECLSPEC_ALIGN(PAGE_SIZE) UINT8 MsrBitmap[PAGE_SIZE];

DECLSPEC_ALIGN(PAGE_SIZE) VMX_EPML4E Epml4[PML4E_ENTRY_COUNT];

DECLSPEC_ALIGN(PAGE_SIZE) VMX_PDPTE Epdpt[PDPTE_ENTRY_COUNT];

DECLSPEC_ALIGN(PAGE_SIZE) VMX_LARGE_PDE Epde[PDPTE_ENTRY_COUNT][PDE_ENTRY_COUNT];

DECLSPEC_ALIGN(PAGE_SIZE) VMX_VMCS VmxOn;

DECLSPEC_ALIGN(PAGE_SIZE) VMX_VMCS Vmcs;

} SHV_VP_DATA, * PSHV_VP_DATA;

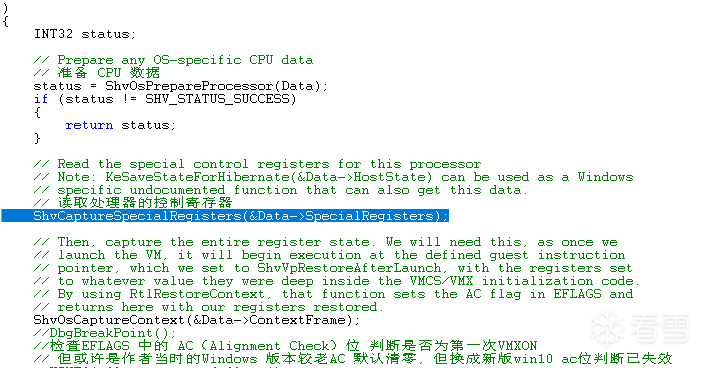

Step 3: Preparation, then vmxon | vmclear | vmptrld

Read the control registers

// Read the special control registers for this processor

// Note: KeSaveStateForHibernate(&Data->HostState) can be used as a Windows

// specific undocumented function that can also get this data.

ShvCaptureSpecialRegisters(&Data->SpecialRegisters);

Capture the CONTEXT

// Then, capture the entire register state. We will need this, as once we

// launch the VM, it will begin execution at the defined guest instruction

// pointer, which we set to ShvVpRestoreAfterLaunch, with the registers set

// to whatever value they were deep inside the VMCS/VMX initialization code.

// By using RtlRestoreContext, that function sets the AC flag in EFLAGS and

// returns here with our registers restored.

ShvOsCaptureContext(&Data->ContextFrame);

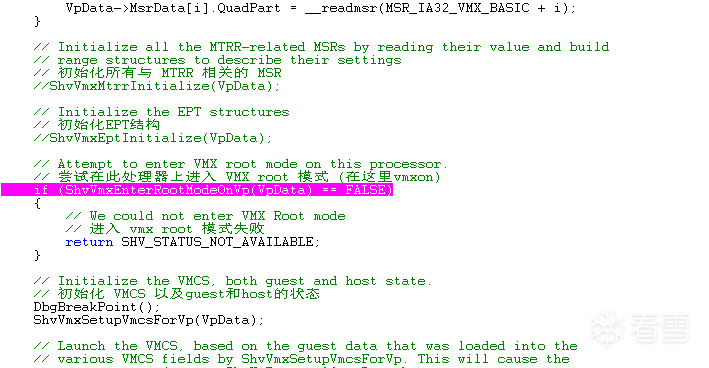

Initialization: vmxon | vmclear | vmptrld

UINT8

ShvVmxEnterRootModeOnVp(

_In_ PSHV_VP_DATA VpData

)

{

PSHV_SPECIAL_REGISTERS Registers = &VpData->SpecialRegisters;

// Ensure that the VMCS can fit into a single page

if (((VpData->MsrData[0].QuadPart & VMX_BASIC_VMCS_SIZE_MASK) >> 32) > PAGE_SIZE)

{

return FALSE;

}

// Ensure that the VMCS is supported in writeback memory

if (((VpData->MsrData[0].QuadPart & VMX_BASIC_MEMORY_TYPE_MASK) >> 50) != MTRR_TYPE_WB)

{

return FALSE;

}

// Ensure that true MSRs can be used for capabilities

if (((VpData->MsrData[0].QuadPart) & VMX_BASIC_DEFAULT1_ZERO) == 0)

{

return FALSE;

}

// Ensure that EPT is available with the needed features SimpleVisor uses

if (((VpData->MsrData[12].QuadPart & VMX_EPT_PAGE_WALK_4_BIT) != 0) &&

((VpData->MsrData[12].QuadPart & VMX_EPTP_WB_BIT) != 0) &&

((VpData->MsrData[12].QuadPart & VMX_EPT_2MB_PAGE_BIT) != 0))

{

// Enable EPT if these features are supported

VpData->EptControls = SECONDARY_EXEC_ENABLE_EPT | SECONDARY_EXEC_ENABLE_VPID;

}

// Capture the revision ID for the VMXON and VMCS region

VpData->VmxOn.RevisionId = VpData->MsrData[0].LowPart;

VpData->Vmcs.RevisionId = VpData->MsrData[0].LowPart;

// Store the physical addresses of all per-LP structures allocated

VpData->VmxOnPhysicalAddress = ShvOsGetPhysicalAddress(&VpData->VmxOn);

VpData->VmcsPhysicalAddress = ShvOsGetPhysicalAddress(&VpData->Vmcs);

VpData->MsrBitmapPhysicalAddress = ShvOsGetPhysicalAddress(VpData->MsrBitmap);

VpData->EptPml4PhysicalAddress = ShvOsGetPhysicalAddress(&VpData->Epml4);

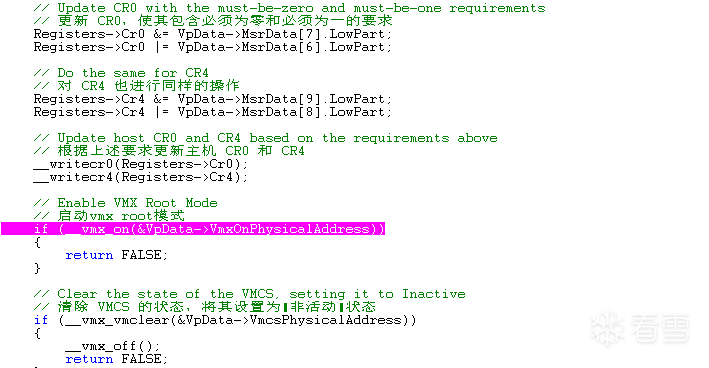

// Update CR0 with the must-be-zero and must-be-one requirements

Registers->Cr0 &= VpData->MsrData[7].LowPart;

Registers->Cr0 |= VpData->MsrData[6].LowPart;

// Do the same for CR4

Registers->Cr4 &= VpData->MsrData[9].LowPart;

Registers->Cr4 |= VpData->MsrData[8].LowPart;

// Update host CR0 and CR4 based on the requirements above

__writecr0(Registers->Cr0);

__writecr4(Registers->Cr4);

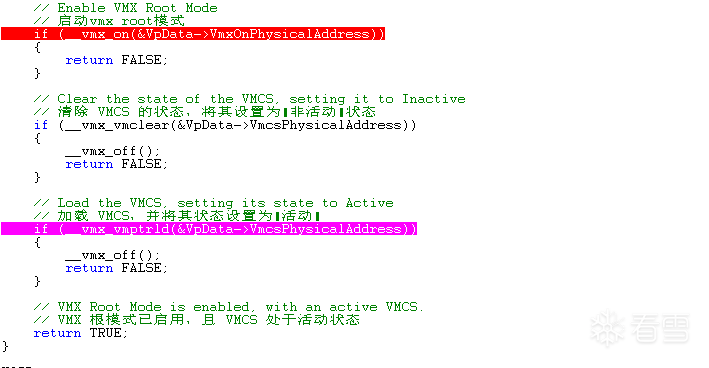

// Enable VMX Root Mode (execute VMXON)

if (__vmx_on(&VpData->VmxOnPhysicalAddress))

{

return FALSE;

}

// Clear the state of the VMCS, setting it to Inactive

if (__vmx_vmclear(&VpData->VmcsPhysicalAddress))

{

__vmx_off();

return FALSE;

}

// Load the VMCS, setting its state to Active

if (__vmx_vmptrld(&VpData->VmcsPhysicalAddress))

{

__vmx_off();

return FALSE;

}

// VMX Root Mode is enabled, with an active VMCS.

return TRUE;

}
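The CR0/CR4 update in the function above follows the fixed-bit rule of the IA32_VMX_CRx_FIXED0 and IA32_VMX_CRx_FIXED1 MSRs: a bit that is 1 in FIXED0 must be 1, and a bit that is 0 in FIXED1 must be 0. Below is a minimal sketch of that rule. The helper name `sim_apply_fixed_bits` is hypothetical, and the values in the usage note are illustrative rather than read from a real CPU.

```c
#include <stdint.h>

/*
 * Hypothetical helper mirroring the "&= FIXED1, |= FIXED0" pattern used for
 * CR0 and CR4 before VMXON.
 */
static uint64_t sim_apply_fixed_bits(uint64_t cr, uint64_t fixed0, uint64_t fixed1)
{
    cr &= fixed1;   /* clear bits that are not allowed to be 1 */
    cr |= fixed0;   /* set bits that are required to be 1 */
    return cr;
}
```

With an illustrative CR4 of 0x6F8, FIXED0 of 0x2000 and FIXED1 of 0x3727FF, the result is 0x26F8: nothing is cleared, and bit 13 (VMXE) is forced on.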

Step 4: Initialize the VMCS and the guest and host state

VOID

ShvVmxSetupVmcsForVp(

_In_ PSHV_VP_DATA VpData

)

{

PSHV_SPECIAL_REGISTERS state = &VpData->SpecialRegisters;

PCONTEXT context = &VpData->ContextFrame;

VMX_GDTENTRY64 vmxGdtEntry;

VMX_EPTP vmxEptp;

//

// Begin by setting the link pointer to the required value for 4KB VMCS.

//

__vmx_vmwrite(VMCS_LINK_POINTER, ~0ULL);

//

// Enable no pin-based options ourselves, but there may be some required by

// the processor. Use ShvUtilAdjustMsr to add those in.

//

__vmx_vmwrite(PIN_BASED_VM_EXEC_CONTROL,

ShvUtilAdjustMsr(VpData->MsrData[13], 0));

//

// In order for our choice of supporting RDTSCP and XSAVE/RESTORES above to

// actually mean something, we have to request secondary controls. We also

// want to activate the MSR bitmap in order to keep them from being caught.

//

__vmx_vmwrite(CPU_BASED_VM_EXEC_CONTROL,

ShvUtilAdjustMsr(VpData->MsrData[14],

CPU_BASED_ACTIVATE_MSR_BITMAP |

CPU_BASED_ACTIVATE_SECONDARY_CONTROLS));

//

// Make sure to enter us in x64 mode at all times.

//

__vmx_vmwrite(VM_EXIT_CONTROLS,

ShvUtilAdjustMsr(VpData->MsrData[15],

VM_EXIT_IA32E_MODE));

//

// As we exit back into the guest, make sure to exist in x64 mode as well.

//

__vmx_vmwrite(VM_ENTRY_CONTROLS,

ShvUtilAdjustMsr(VpData->MsrData[16],

VM_ENTRY_IA32E_MODE));

//

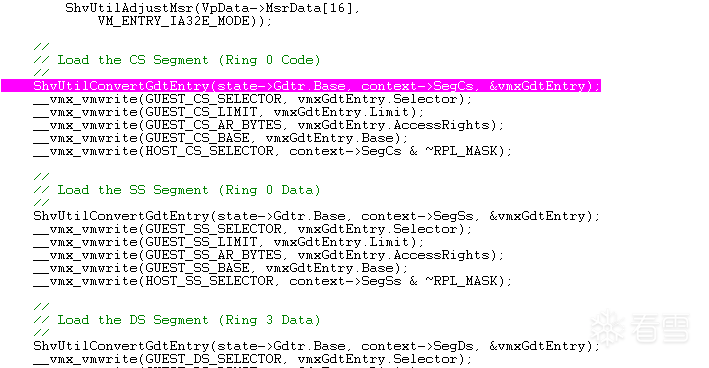

// Load the CS Segment (Ring 0 Code)

//

ShvUtilConvertGdtEntry(state->Gdtr.Base, context->SegCs, &vmxGdtEntry);

__vmx_vmwrite(GUEST_CS_SELECTOR, vmxGdtEntry.Selector);

__vmx_vmwrite(GUEST_CS_LIMIT, vmxGdtEntry.Limit);

__vmx_vmwrite(GUEST_CS_AR_BYTES, vmxGdtEntry.AccessRights);

__vmx_vmwrite(GUEST_CS_BASE, vmxGdtEntry.Base);

__vmx_vmwrite(HOST_CS_SELECTOR, context->SegCs & ~RPL_MASK);

//

// Load the SS Segment (Ring 0 Data)

//

ShvUtilConvertGdtEntry(state->Gdtr.Base, context->SegSs, &vmxGdtEntry);

__vmx_vmwrite(GUEST_SS_SELECTOR, vmxGdtEntry.Selector);

__vmx_vmwrite(GUEST_SS_LIMIT, vmxGdtEntry.Limit);

__vmx_vmwrite(GUEST_SS_AR_BYTES, vmxGdtEntry.AccessRights);

__vmx_vmwrite(GUEST_SS_BASE, vmxGdtEntry.Base);

__vmx_vmwrite(HOST_SS_SELECTOR, context->SegSs & ~RPL_MASK);

//

// Load the DS Segment (Ring 3 Data)

//

ShvUtilConvertGdtEntry(state->Gdtr.Base, context->SegDs, &vmxGdtEntry);

__vmx_vmwrite(GUEST_DS_SELECTOR, vmxGdtEntry.Selector);

__vmx_vmwrite(GUEST_DS_LIMIT, vmxGdtEntry.Limit);

__vmx_vmwrite(GUEST_DS_AR_BYTES, vmxGdtEntry.AccessRights);

__vmx_vmwrite(GUEST_DS_BASE, vmxGdtEntry.Base);

__vmx_vmwrite(HOST_DS_SELECTOR, context->SegDs & ~RPL_MASK);

//

// Load the ES Segment (Ring 3 Data)

//

ShvUtilConvertGdtEntry(state->Gdtr.Base, context->SegEs, &vmxGdtEntry);

__vmx_vmwrite(GUEST_ES_SELECTOR, vmxGdtEntry.Selector);

__vmx_vmwrite(GUEST_ES_LIMIT, vmxGdtEntry.Limit);

__vmx_vmwrite(GUEST_ES_AR_BYTES, vmxGdtEntry.AccessRights);

__vmx_vmwrite(GUEST_ES_BASE, vmxGdtEntry.Base);

__vmx_vmwrite(HOST_ES_SELECTOR, context->SegEs & ~RPL_MASK);

//

// Load the FS Segment (Ring 3 Compatibility-Mode TEB)

//

ShvUtilConvertGdtEntry(state->Gdtr.Base, context->SegFs, &vmxGdtEntry);

__vmx_vmwrite(GUEST_FS_SELECTOR, vmxGdtEntry.Selector);

__vmx_vmwrite(GUEST_FS_LIMIT, vmxGdtEntry.Limit);

__vmx_vmwrite(GUEST_FS_AR_BYTES, vmxGdtEntry.AccessRights);

__vmx_vmwrite(GUEST_FS_BASE, vmxGdtEntry.Base);

__vmx_vmwrite(HOST_FS_BASE, vmxGdtEntry.Base);

__vmx_vmwrite(HOST_FS_SELECTOR, context->SegFs & ~RPL_MASK);

//

// Load the GS Segment (Ring 3 Data if in Compatibility-Mode, MSR-based in Long Mode)

//

ShvUtilConvertGdtEntry(state->Gdtr.Base, context->SegGs, &vmxGdtEntry);

__vmx_vmwrite(GUEST_GS_SELECTOR, vmxGdtEntry.Selector);

__vmx_vmwrite(GUEST_GS_LIMIT, vmxGdtEntry.Limit);

__vmx_vmwrite(GUEST_GS_AR_BYTES, vmxGdtEntry.AccessRights);

__vmx_vmwrite(GUEST_GS_BASE, state->MsrGsBase);

__vmx_vmwrite(HOST_GS_BASE, state->MsrGsBase);

__vmx_vmwrite(HOST_GS_SELECTOR, context->SegGs & ~RPL_MASK);

//

// Load the Task Register (Ring 0 TSS)

//

ShvUtilConvertGdtEntry(state->Gdtr.Base, state->Tr, &vmxGdtEntry);

__vmx_vmwrite(GUEST_TR_SELECTOR, vmxGdtEntry.Selector);

__vmx_vmwrite(GUEST_TR_LIMIT, vmxGdtEntry.Limit);

__vmx_vmwrite(GUEST_TR_AR_BYTES, vmxGdtEntry.AccessRights);

__vmx_vmwrite(GUEST_TR_BASE, vmxGdtEntry.Base);

__vmx_vmwrite(HOST_TR_BASE, vmxGdtEntry.Base);

__vmx_vmwrite(HOST_TR_SELECTOR, state->Tr & ~RPL_MASK);

//

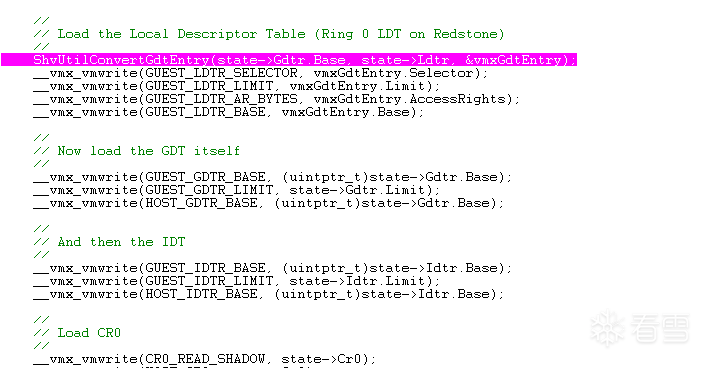

// Load the Local Descriptor Table (Ring 0 LDT on Redstone)

//

ShvUtilConvertGdtEntry(state->Gdtr.Base, state->Ldtr, &vmxGdtEntry);

__vmx_vmwrite(GUEST_LDTR_SELECTOR, vmxGdtEntry.Selector);

__vmx_vmwrite(GUEST_LDTR_LIMIT, vmxGdtEntry.Limit);

__vmx_vmwrite(GUEST_LDTR_AR_BYTES, vmxGdtEntry.AccessRights);

__vmx_vmwrite(GUEST_LDTR_BASE, vmxGdtEntry.Base);

//

// Now load the GDT itself

//

__vmx_vmwrite(GUEST_GDTR_BASE, (uintptr_t)state->Gdtr.Base);

__vmx_vmwrite(GUEST_GDTR_LIMIT, state->Gdtr.Limit);

__vmx_vmwrite(HOST_GDTR_BASE, (uintptr_t)state->Gdtr.Base);

//

// And then the IDT

//

__vmx_vmwrite(GUEST_IDTR_BASE, (uintptr_t)state->Idtr.Base);

__vmx_vmwrite(GUEST_IDTR_LIMIT, state->Idtr.Limit);

__vmx_vmwrite(HOST_IDTR_BASE, (uintptr_t)state->Idtr.Base);

//

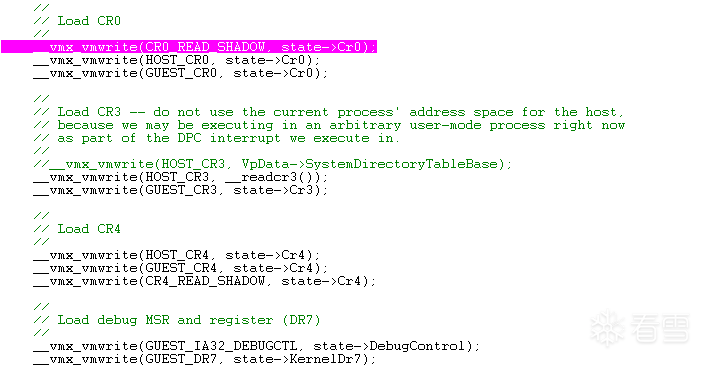

// Load CR0

//

__vmx_vmwrite(CR0_READ_SHADOW, state->Cr0);

__vmx_vmwrite(HOST_CR0, state->Cr0);

__vmx_vmwrite(GUEST_CR0, state->Cr0);

//

// Load CR3 -- do not use the current process' address space for the host,

// because we may be executing in an arbitrary user-mode process right now

// as part of the DPC interrupt we execute in.

//

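// NOTE: this minimal version writes the current CR3 instead of the system

// one, which is exactly what the comment above warns against. The original

// line is kept below for reference.

//__vmx_vmwrite(HOST_CR3, VpData->SystemDirectoryTableBase);

__vmx_vmwrite(HOST_CR3, __readcr3());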

__vmx_vmwrite(GUEST_CR3, state->Cr3);

//

// Load CR4

//

__vmx_vmwrite(HOST_CR4, state->Cr4);

__vmx_vmwrite(GUEST_CR4, state->Cr4);

__vmx_vmwrite(CR4_READ_SHADOW, state->Cr4);

//

// Load debug MSR and register (DR7)

//

__vmx_vmwrite(GUEST_IA32_DEBUGCTL, state->DebugControl);

__vmx_vmwrite(GUEST_DR7, state->KernelDr7);

//

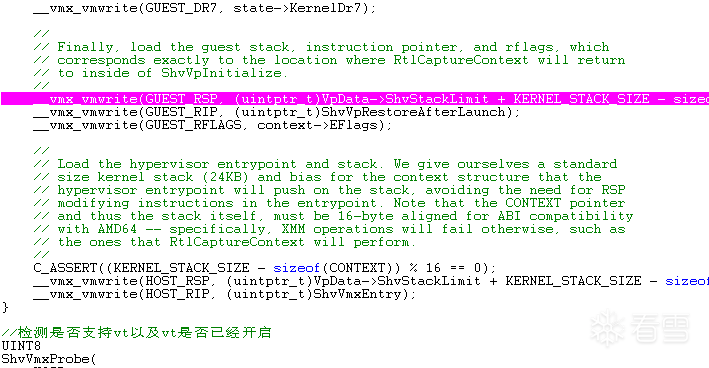

// Finally, load the guest stack, instruction pointer, and rflags, which

// corresponds exactly to the location where RtlCaptureContext will return

// to inside of ShvVpInitialize.

//

__vmx_vmwrite(GUEST_RSP, (uintptr_t)VpData->ShvStackLimit + KERNEL_STACK_SIZE - sizeof(CONTEXT));

__vmx_vmwrite(GUEST_RIP, (uintptr_t)ShvVpRestoreAfterLaunch);

__vmx_vmwrite(GUEST_RFLAGS, context->EFlags);

//

// Load the hypervisor entrypoint and stack. We give ourselves a standard

// size kernel stack (24KB) and bias for the context structure that the

// hypervisor entrypoint will push on the stack, avoiding the need for RSP

// modifying instructions in the entrypoint. Note that the CONTEXT pointer

// and thus the stack itself, must be 16-byte aligned for ABI compatibility

// with AMD64 -- specifically, XMM operations will fail otherwise, such as

// the ones that RtlCaptureContext will perform.

//

C_ASSERT((KERNEL_STACK_SIZE - sizeof(CONTEXT)) % 16 == 0);

__vmx_vmwrite(HOST_RSP, (uintptr_t)VpData->ShvStackLimit + KERNEL_STACK_SIZE - sizeof(CONTEXT));

__vmx_vmwrite(HOST_RIP, (uintptr_t)ShvVmxEntry);

}
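ShvUtilAdjustMsr is not shown in this post. The sketch below is my assumption of what such a helper does, based on the usual layout of the VMX capability MSRs: the low 32 bits report the control bits that must be 1, and the high 32 bits report the bits that are allowed to be 1. The name `sim_adjust_controls` is hypothetical.

```c
#include <stdint.h>

/*
 * Hypothetical stand-in for ShvUtilAdjustMsr: reconcile the controls we
 * would like to enable with what the capability MSR allows and requires.
 */
static uint32_t sim_adjust_controls(uint64_t capability_msr, uint32_t desired)
{
    uint32_t must_be_one = (uint32_t)(capability_msr & 0xFFFFFFFFull);
    uint32_t may_be_one  = (uint32_t)(capability_msr >> 32);

    desired &= may_be_one;    /* drop controls the CPU does not support */
    desired |= must_be_one;   /* add controls the CPU insists on */
    return desired;
}
```

This is why passing 0 as the desired value for the pin-based controls still produces a valid field: the required bits are added automatically.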

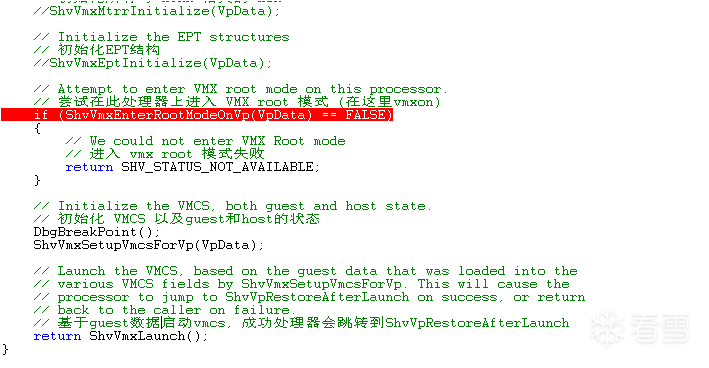

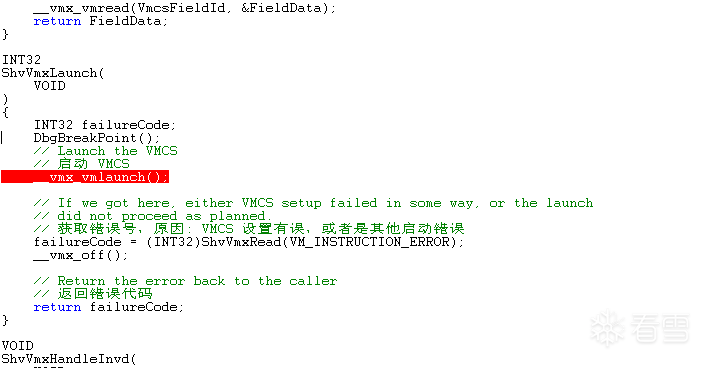

Step 5: vmlaunch and where execution continues

INT32 failureCode;

DbgBreakPoint();

// Launch the VMCS

__vmx_vmlaunch();

// If we got here, either VMCS setup failed in some way, or the launch

// did not proceed as planned. Read the error number to find out why.

failureCode = (INT32)ShvVmxRead(VM_INSTRUCTION_ERROR);

__vmx_off();

// Return the error back to the caller

return failureCode;

Note: if __vmx_vmlaunch succeeds, execution jumps straight to the guest RIP, and the code after __vmx_vmlaunch is never executed.

__vmx_vmwrite(GUEST_RSP, (uintptr_t)VpData->ShvStackLimit + KERNEL_STACK_SIZE - sizeof(CONTEXT));

__vmx_vmwrite(GUEST_RIP, (uintptr_t)ShvVpRestoreAfterLaunch);

__vmx_vmwrite(GUEST_RFLAGS, context->EFlags);

As we can see, GUEST_RIP is set to ShvVpRestoreAfterLaunch

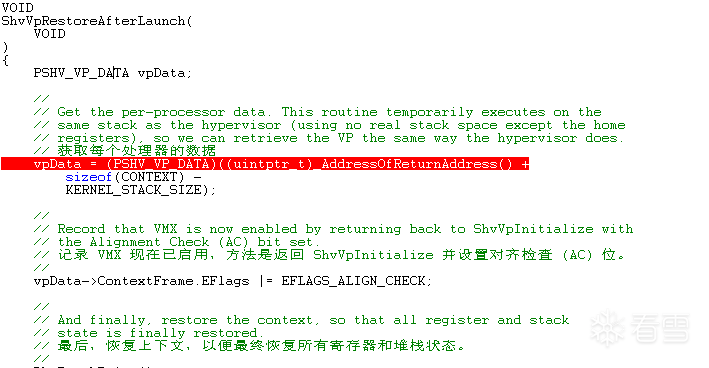

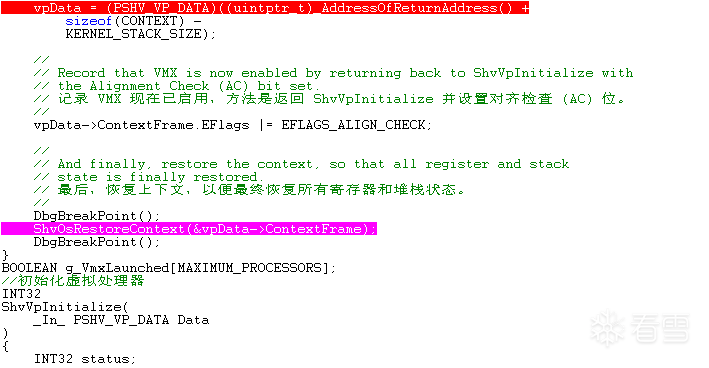

DECLSPEC_NORETURN

VOID

ShvVpRestoreAfterLaunch(

VOID

)

{

PSHV_VP_DATA vpData;

//

// Get the per-processor data. This routine temporarily executes on the

// same stack as the hypervisor (using no real stack space except the home

// registers), so we can retrieve the VP the same way the hypervisor does.

vpData = (PSHV_VP_DATA)((uintptr_t)_AddressOfReturnAddress() +

sizeof(CONTEXT) -

KERNEL_STACK_SIZE);

//

// Record that VMX is now enabled by returning back to ShvVpInitialize with

// the Alignment Check (AC) bit set.

//

vpData->ContextFrame.EFlags |= EFLAGS_ALIGN_CHECK;

//

// And finally, restore the context, so that all register and stack

// state is finally restored.

//

ShvOsRestoreContext(&vpData->ContextFrame);

}
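The pointer arithmetic in ShvVpRestoreAfterLaunch works because ShvStackLimit is the first member of SHV_VP_DATA, so the base of the stack is also the base of the per-processor structure. A minimal user-mode sketch of the round trip follows. The helper names are hypothetical, and both sizes are assumptions: 0x6000 for the 24KB stack mentioned in the source comments, and 0x4D0 for sizeof(CONTEXT) on x64.

```c
#include <stdint.h>

#define SIM_KERNEL_STACK_SIZE 0x6000u  /* assumed: 24KB kernel stack */
#define SIM_CONTEXT_SIZE      0x4D0u   /* assumed: sizeof(CONTEXT) on x64 */

/* Value written to GUEST_RSP / HOST_RSP for a structure at vp_data_base. */
static uintptr_t sim_initial_rsp(uintptr_t vp_data_base)
{
    return vp_data_base + SIM_KERNEL_STACK_SIZE - SIM_CONTEXT_SIZE;
}

/* Inverse operation: recover the structure base from that stack pointer. */
static uintptr_t sim_vp_data_from_rsp(uintptr_t rsp)
{
    return rsp + SIM_CONTEXT_SIZE - SIM_KERNEL_STACK_SIZE;
}
```

With a page-aligned base of 0x100000, the initial stack pointer is 0x105B30, which is 16-byte aligned, and the inverse function returns 0x100000 again.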

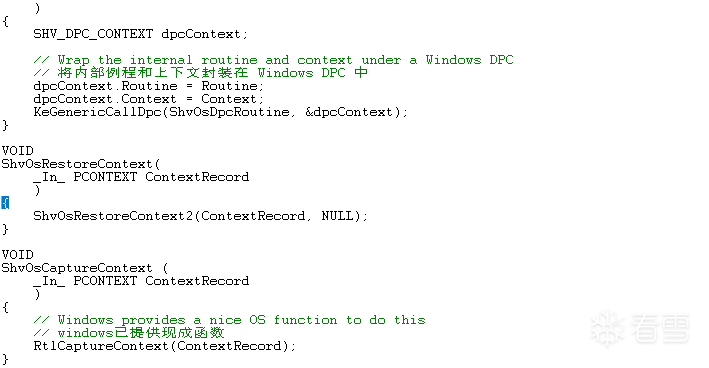

VOID

ShvOsRestoreContext(

_In_ PCONTEXT ContextRecord

)

{

ShvOsRestoreContext2(ContextRecord, NULL);

}

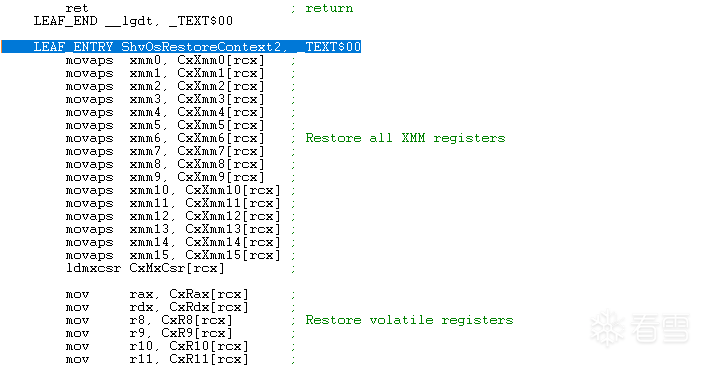

Keep tracing into ShvOsRestoreContext2

LEAF_ENTRY ShvOsRestoreContext2, _TEXT$00

movaps xmm0, CxXmm0[rcx] ;

movaps xmm1, CxXmm1[rcx] ;

movaps xmm2, CxXmm2[rcx] ;

movaps xmm3, CxXmm3[rcx] ;

movaps xmm4, CxXmm4[rcx] ;

movaps xmm5, CxXmm5[rcx] ;

movaps xmm6, CxXmm6[rcx] ; Restore all XMM registers

movaps xmm7, CxXmm7[rcx] ;

movaps xmm8, CxXmm8[rcx] ;

movaps xmm9, CxXmm9[rcx] ;

movaps xmm10, CxXmm10[rcx] ;

movaps xmm11, CxXmm11[rcx] ;

movaps xmm12, CxXmm12[rcx] ;

movaps xmm13, CxXmm13[rcx] ;

movaps xmm14, CxXmm14[rcx] ;

movaps xmm15, CxXmm15[rcx] ;

ldmxcsr CxMxCsr[rcx] ;

mov rax, CxRax[rcx] ;

mov rdx, CxRdx[rcx] ;

mov r8, CxR8[rcx] ; Restore volatile registers

mov r9, CxR9[rcx] ;

mov r10, CxR10[rcx] ;

mov r11, CxR11[rcx] ;

mov rbx, CxRbx[rcx] ;

mov rsi, CxRsi[rcx] ;

mov rdi, CxRdi[rcx] ;

mov rbp, CxRbp[rcx] ; Restore non volatile registers

mov r12, CxR12[rcx] ;

mov r13, CxR13[rcx] ;

mov r14, CxR14[rcx] ;

mov r15, CxR15[rcx] ;

cli ; Disable interrupts

push CxEFlags[rcx] ; Push RFLAGS on stack

popfq ; Restore RFLAGS

mov rsp, CxRsp[rcx] ; Restore old stack

push CxRip[rcx] ; Push RIP on old stack

mov rcx, CxRcx[rcx] ; Restore RCX since we spilled it

ret ; Restore RIP

LEAF_END ShvOsRestoreContext2, _TEXT$00

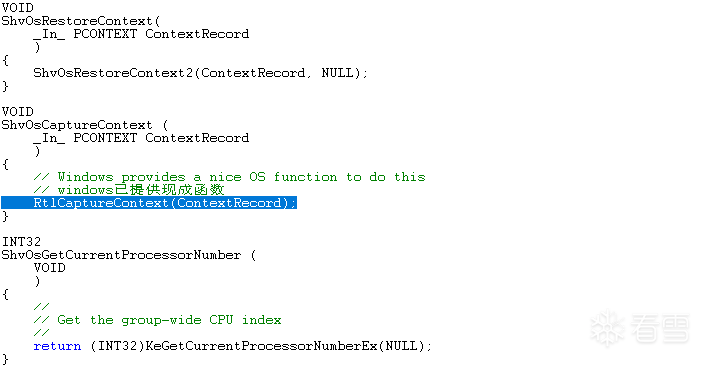

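As you can see, it restores CxRip[rcx]: pushing that value onto the restored stack sets the address that ret returns to. Execution actually returns into ShvOsCaptureContext, right after its call to RtlCaptureContext.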

VOID

ShvOsCaptureContext (

_In_ PCONTEXT ContextRecord

)

{

// Windows provides a nice OS function to do this

RtlCaptureContext(ContextRecord);

}

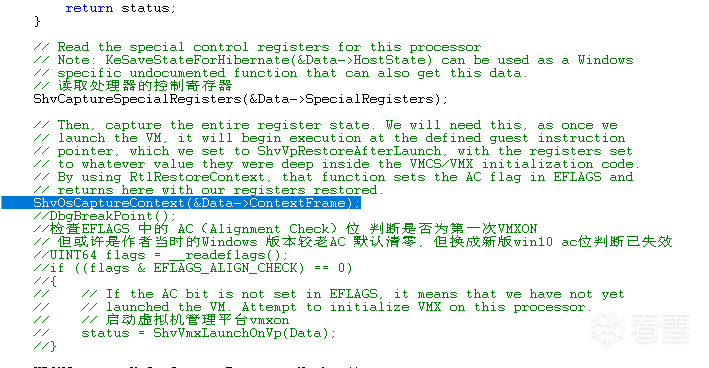

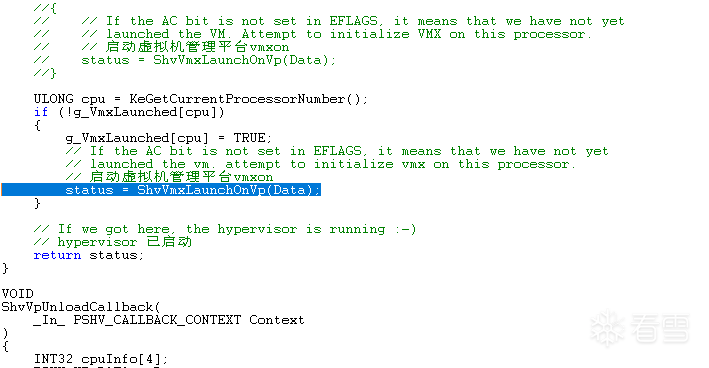

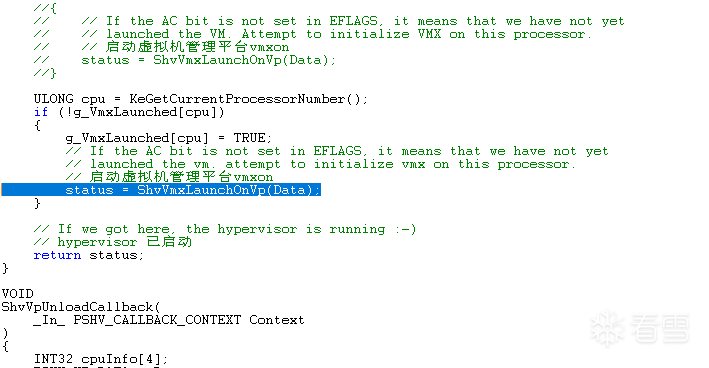

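Returning from ShvOsCaptureContext brings us back into ShvVpInitialize, right after the capture call. Because g_VmxLaunched[cpu] is already TRUE on this second pass, the status = ShvVmxLaunchOnVp(Data); call is skipped and execution continues after it.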

// Then, capture the entire register state. We will need this, as once we

// launch the VM, it will begin execution at the defined guest instruction

// pointer, which we set to ShvVpRestoreAfterLaunch, with the registers set

// to whatever value they were deep inside the VMCS/VMX initialization code.

// By using RtlRestoreContext, that function sets the AC flag in EFLAGS and

// returns here with our registers restored.

ShvOsCaptureContext(&Data->ContextFrame);

ULONG cpu = KeGetCurrentProcessorNumber();

if (!g_VmxLaunched[cpu])

{

g_VmxLaunched[cpu] = TRUE;

// First pass: the flag was clear, so the VM has not been launched yet.

// This version tracks that with g_VmxLaunched instead of testing the AC bit

// in EFLAGS. Attempt to initialize VMX on this processor.

status = ShvVmxLaunchOnVp(Data);

}

// If we got here, the hypervisor is running :-)

return status;

Returning once more ends the whole callback, and the system continues to run normally.
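The capture, launch, restore sequence behaves much like setjmp/longjmp: the code after the capture point runs twice, and a flag tells the two passes apart. The following user-mode analogy is my own illustration and not SimpleVisor code. All names are hypothetical, and longjmp stands in for vmlaunch followed by the context restore.

```c
#include <setjmp.h>
#include <stdbool.h>

static jmp_buf g_sim_context;          /* plays the role of Data->ContextFrame */
static volatile bool g_sim_launched;   /* plays the role of g_VmxLaunched[cpu] */
static volatile int g_sim_passes;      /* counts passes over the capture point */

/* Stands in for vmlaunch -> ShvVpRestoreAfterLaunch -> ShvOsRestoreContext. */
static void sim_launch(void)
{
    longjmp(g_sim_context, 1);   /* never returns to its caller */
}

/* Returns how many times the code after the capture point was executed. */
static int sim_initialize(void)
{
    g_sim_launched = false;
    g_sim_passes = 0;

    setjmp(g_sim_context);       /* "capture": execution resumes here later */
    g_sim_passes++;

    if (!g_sim_launched)
    {
        g_sim_launched = true;   /* set before launching, as in the driver */
        sim_launch();
    }

    return g_sim_passes;         /* reached only on the second pass */
}
```

Unlike setjmp, RtlCaptureContext has no return value that distinguishes the first pass from the resumed one, which is why a separate marker (the AC bit upstream, g_VmxLaunched here) is needed.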

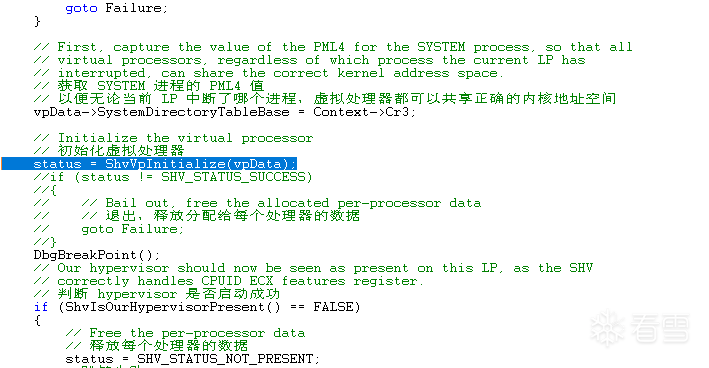

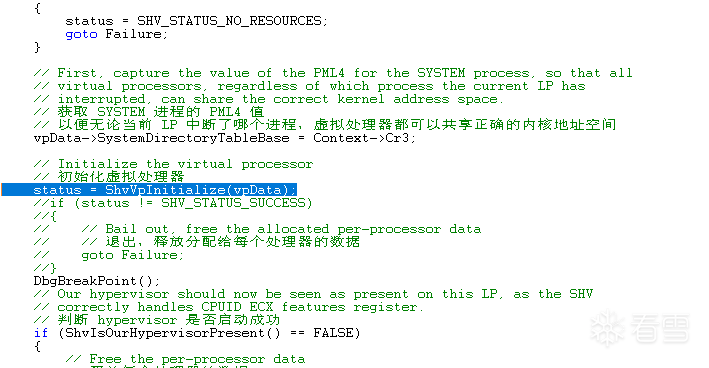

// Initialize the virtual processor

status = ShvVpInitialize(vpData);

if (status != SHV_STATUS_SUCCESS)

{

// Bail out, free the allocated per-processor data

goto Failure;

}

// Our hypervisor should now be seen as present on this LP, as the SHV

// correctly handles CPUID ECX features register.

if (ShvIsOurHypervisorPresent() == FALSE)

{

// Free the per-processor data

status = SHV_STATUS_NOT_PRESENT;

goto Failure;

}

// This CPU is hyperjacked!

_InterlockedIncrement((volatile long*)&Context->InitCount);

return;

Addendum: earlier we set HOST_RIP to ShvVmxEntry

//

// Load the hypervisor entrypoint and stack. We give ourselves a standard

// size kernel stack (24KB) and bias for the context structure that the

// hypervisor entrypoint will push on the stack, avoiding the need for RSP

// modifying instructions in the entrypoint. Note that the CONTEXT pointer

// and thus the stack itself, must be 16-byte aligned for ABI compatibility

// with AMD64 -- specifically, XMM operations will fail otherwise, such as

// the ones that RtlCaptureContext will perform.

//

C_ASSERT((KERNEL_STACK_SIZE - sizeof(CONTEXT)) % 16 == 0);

__vmx_vmwrite(HOST_RSP, (uintptr_t)VpData->ShvStackLimit + KERNEL_STACK_SIZE - sizeof(CONTEXT));

__vmx_vmwrite(HOST_RIP, (uintptr_t)ShvVmxEntry);

ShvVmxEntry saves the context and then jumps to ShvVmxEntryHandler

ShvVmxEntry PROC

push rcx ; save the RCX register, which we spill below

lea rcx, [rsp+8h] ; store the context in the stack, bias for

; the return address and the push we just did.

call ShvOsCaptureContext ; save the current register state.

; note that this is a specially written function

; which has the following key characteristics:

; 1) it does not taint the value of RCX

; 2) it does not spill any registers, nor

; expect home space to be allocated for it

jmp ShvVmxEntryHandler ; jump to the C code handler. we assume that it

; compiled with optimizations and does not use

; home space, which is true of release builds.

ShvVmxEntry ENDP

ShvVmxEntryHandler in turn calls ShvVmxHandleExit

DECLSPEC_NORETURN

VOID

ShvVmxEntryHandler(

_In_ PCONTEXT Context

)

{

SHV_VP_STATE guestContext;

PSHV_VP_DATA vpData;

//

// Because we had to use RCX when calling ShvOsCaptureContext, its value

// was actually pushed on the stack right before the call. Go dig into the

// stack to find it, and overwrite the bogus value that's there now.

//

Context->Rcx = *(UINT64*)((uintptr_t)Context - sizeof(Context->Rcx));

//

// Get the per-VP data for this processor.

//

vpData = (VOID*)((uintptr_t)(Context + 1) - KERNEL_STACK_SIZE);

//

// Build a little stack context to make it easier to keep track of certain

// guest state, such as the RIP/RSP/RFLAGS, and the exit reason. The rest

// of the general purpose registers come from the context structure that we

// captured on our own with RtlCaptureContext in the assembly entrypoint.

//

guestContext.GuestEFlags = ShvVmxRead(GUEST_RFLAGS);

guestContext.GuestRip = ShvVmxRead(GUEST_RIP);

guestContext.GuestRsp = ShvVmxRead(GUEST_RSP);

guestContext.ExitReason = ShvVmxRead(VM_EXIT_REASON) & 0xFFFF;

guestContext.VpRegs = Context;

guestContext.ExitVm = FALSE;

//

// Call the generic handler

//

ShvVmxHandleExit(&guestContext);

//

// Did we hit the magic exit sequence, or should we resume back to the VM

// context?

//

if (guestContext.ExitVm != FALSE)

{

//

// Return the VP Data structure in RAX:RBX which is going to be part of

// the CPUID response that the caller (ShvVpUninitialize) expects back.

// Return confirmation in RCX that we are loaded

//

Context->Rax = (uintptr_t)vpData >> 32;

Context->Rbx = (uintptr_t)vpData & 0xFFFFFFFF;

Context->Rcx = 0x43434343;

//

// Perform any OS-specific CPU uninitialization work

//

ShvOsUnprepareProcessor(vpData);

//

// Our callback routine may have interrupted an arbitrary user process,

// and therefore not a thread running with a systemwide page directory.

// Therefore if we return back to the original caller after turning off

// VMX, it will keep our current "host" CR3 value which we set on entry

// to the PML4 of the SYSTEM process. We want to return back with the

// correct value of the "guest" CR3, so that the currently executing

// process continues to run with its expected address space mappings.

//

__writecr3(ShvVmxRead(GUEST_CR3));

//

// Finally, restore the stack, instruction pointer and EFLAGS to the

// original values present when the instruction causing our VM-Exit

// execute (such as ShvVpUninitialize). This will effectively act as

// a longjmp back to that location.

//

Context->Rsp = guestContext.GuestRsp;

Context->Rip = (UINT64)guestContext.GuestRip;

Context->EFlags = (UINT32)guestContext.GuestEFlags;

//

// Turn off VMX root mode on this logical processor. We're done here.

//

__vmx_off();

}

else

{

//

// Because we won't be returning back into assembly code, nothing will

// ever know about the "pop rcx" that must technically be done (or more

// accurately "add rsp, 8", as rcx will already be correct thanks to the

// fixup earlier). In order to keep the stack sane, do that adjustment

// here.

//

Context->Rsp += sizeof(Context->Rcx);

//

// Return into a VMXRESUME intrinsic, which we broke out as its own

// function, in order to allow this to work. No assembly code will be

// needed as RtlRestoreContext will fix all the GPRs, and what we just

// did to RSP will take care of the rest.

//

Context->Rip = (UINT64)ShvVmxResume;

}

//

// Restore the context to either ShvVmxResume, in which case the CPU's VMX

// facility will do the "true" return back to the VM (but without restoring

// GPRs, which is why we must do it here), or to the original guest's RIP,

// which we use in case an exit was requested. In this case VMX must now be

// off, and this will look like a longjmp to the original stack and RIP.

//

ShvOsRestoreContext(Context);

}
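On the exit path, the handler above returns the vpData pointer split across RAX and RBX, presumably because a CPUID response only carries 32 bits per register. A minimal sketch of the split and of the recombination the caller would have to perform is shown below. Both helper names are hypothetical, and the pointer in the usage note is just an example value.

```c
#include <stdint.h>

/* Split a 64-bit pointer value the way the handler fills RAX and RBX. */
static void sim_split_pointer(uint64_t value, uint64_t *rax, uint64_t *rbx)
{
    *rax = value >> 32;             /* upper 32 bits */
    *rbx = value & 0xFFFFFFFFull;   /* lower 32 bits */
}

/* Rebuild the pointer value from the two 32-bit halves. */
static uint64_t sim_join_pointer(uint64_t rax, uint64_t rbx)
{
    return (rax << 32) | (rbx & 0xFFFFFFFFull);
}
```

For 0xFFFF8A0012345000, the halves are 0xFFFF8A00 and 0x12345000, and joining them gives the original value back.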

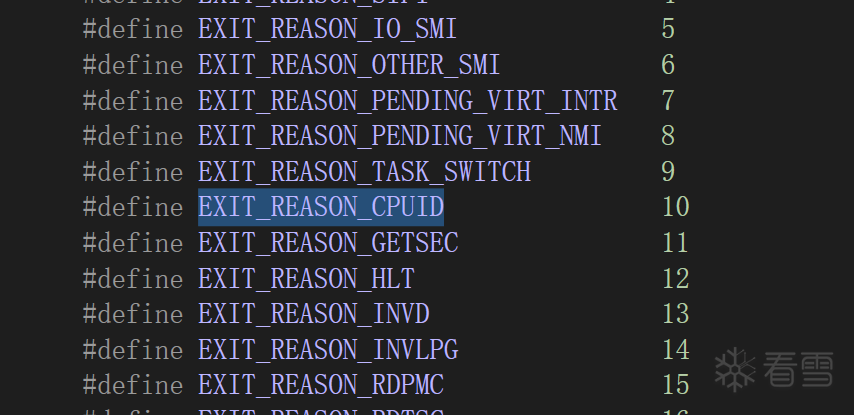

ShvVmxHandleExit is where the exit reason is dispatched

VOID

ShvVmxHandleExit(

_In_ PSHV_VP_STATE VpState

)

{

//

// This is the generic VM-Exit handler. Decode the reason for the exit and

// call the appropriate handler. As per Intel specifications, given that we

// have requested no optional exits whatsoever, we should only see CPUID,

// INVD, XSETBV and other VMX instructions. GETSEC cannot happen as we do

// not run in SMX context.

//

switch (VpState->ExitReason)

{

case EXIT_REASON_CPUID:

ShvVmxHandleCpuid(VpState);

break;

case EXIT_REASON_INVD:

ShvVmxHandleInvd();

break;

case EXIT_REASON_XSETBV:

ShvVmxHandleXsetbv(VpState);

break;

case EXIT_REASON_VMCALL:

case EXIT_REASON_VMCLEAR:

case EXIT_REASON_VMLAUNCH:

case EXIT_REASON_VMPTRLD:

case EXIT_REASON_VMPTRST:

case EXIT_REASON_VMREAD:

case EXIT_REASON_VMRESUME:

case EXIT_REASON_VMWRITE:

case EXIT_REASON_VMXOFF:

case EXIT_REASON_VMXON:

ShvVmxHandleVmx(VpState);

break;

default:

break;

}

//

// Move the instruction pointer to the next instruction after the one that

// caused the exit. Since we are not doing any special handling or changing

// of execution, this can be done for any exit reason.

//

VpState->GuestRip += ShvVmxRead(VM_EXIT_INSTRUCTION_LEN);

__vmx_vmwrite(GUEST_RIP, VpState->GuestRip);

}

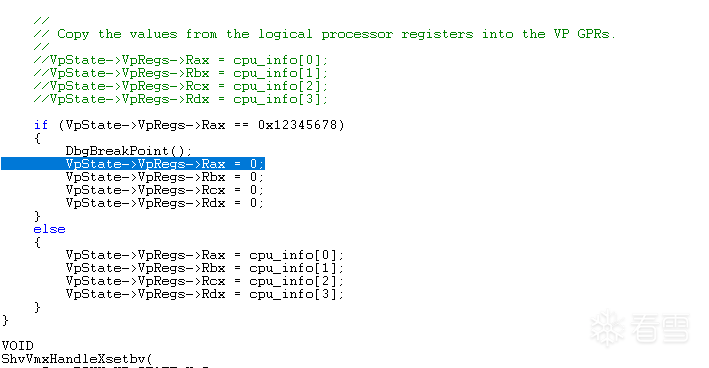

Stepping further into ShvVmxHandleCpuid: I modified this function so that, when a cpuid is intercepted with rax = 0x12345678, the returned results are set to zero

if (VpState->VpRegs->Rax == 0x12345678)

{

DbgBreakPoint();

VpState->VpRegs->Rax = 0;

VpState->VpRegs->Rbx = 0;

VpState->VpRegs->Rcx = 0;

VpState->VpRegs->Rdx = 0;

}

else

{

VpState->VpRegs->Rax = cpu_info[0];

VpState->VpRegs->Rbx = cpu_info[1];

VpState->VpRegs->Rcx = cpu_info[2];

VpState->VpRegs->Rdx = cpu_info[3];

}
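The intercept logic above can be tried out in user mode with a small simulation. This is only a sketch under my own assumptions: `sim_regs` and `sim_handle_cpuid` are hypothetical names, and the "real" CPUID result is passed in as an array instead of being produced by `__cpuidex`.

```c
#include <stdint.h>

#define SIM_MAGIC_LEAF 0x12345678u

/* Hypothetical stand-in for the guest registers stored in VpRegs. */
typedef struct
{
    uint64_t rax, rbx, rcx, rdx;
} sim_regs;

/* Zero the result for the magic leaf, otherwise pass the real values through. */
static void sim_handle_cpuid(sim_regs *regs, const int32_t real[4])
{
    if (regs->rax == SIM_MAGIC_LEAF)
    {
        regs->rax = 0;
        regs->rbx = 0;
        regs->rcx = 0;
        regs->rdx = 0;
    }
    else
    {
        /* The uint32_t casts avoid sign extension from the signed array. */
        regs->rax = (uint32_t)real[0];
        regs->rbx = (uint32_t)real[1];
        regs->rcx = (uint32_t)real[2];
        regs->rdx = (uint32_t)real[3];
    }
}
```

Calling it with rax set to 0x12345678 leaves all four registers at zero, and any other leaf returns the values from the array unchanged.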

The test is carried out in Step 6.

Step 6: Debugging

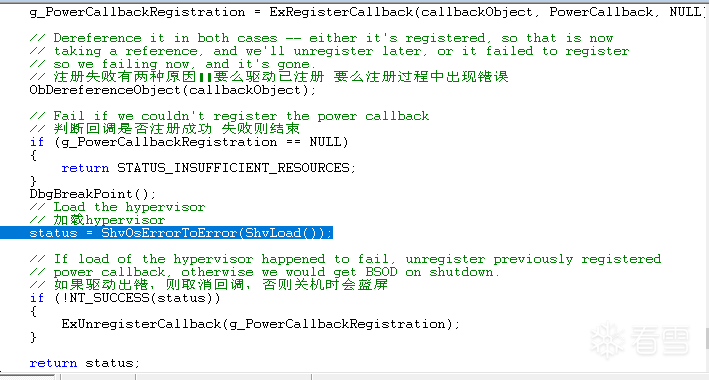

Stepping through in the debugger, execution first enters ShvLoad()

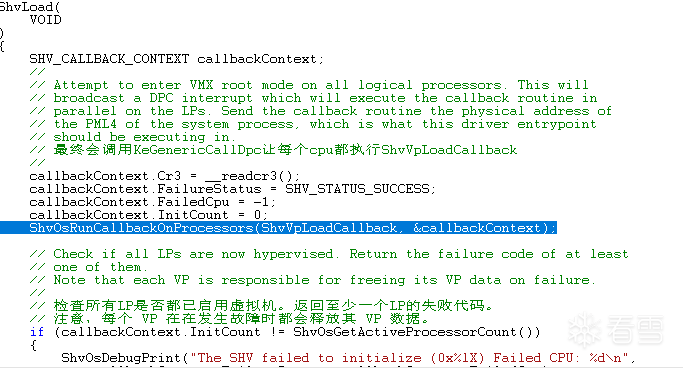

Enter the ShvVpLoadCallback callback

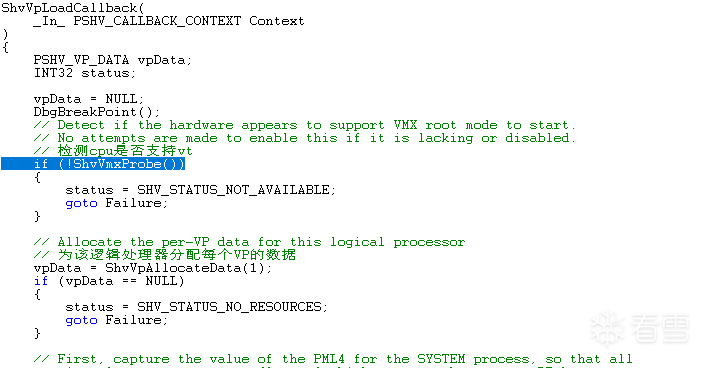

Check the environment

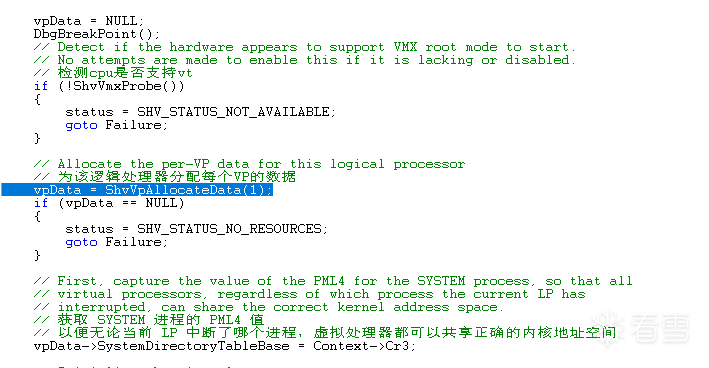

Allocate memory

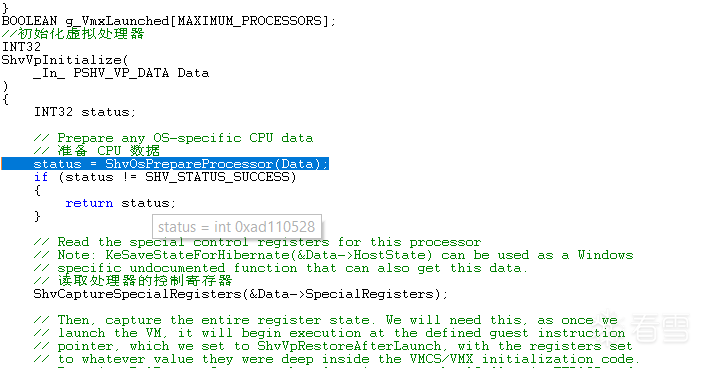

Fill in the data and start VT

Prepare the CPU data

Read the register data

Save the context

Enter the VT launch routine

Step in further

vmxon

__vmx_vmclear & __vmx_vmptrld

Fill in the segment registers

Fill in the GDT and IDT

Fill in cr0, cr3, cr4 and dr7

Set rsp and rip

Enter ShvVmxLaunch

Enter ShvVpRestoreAfterLaunch

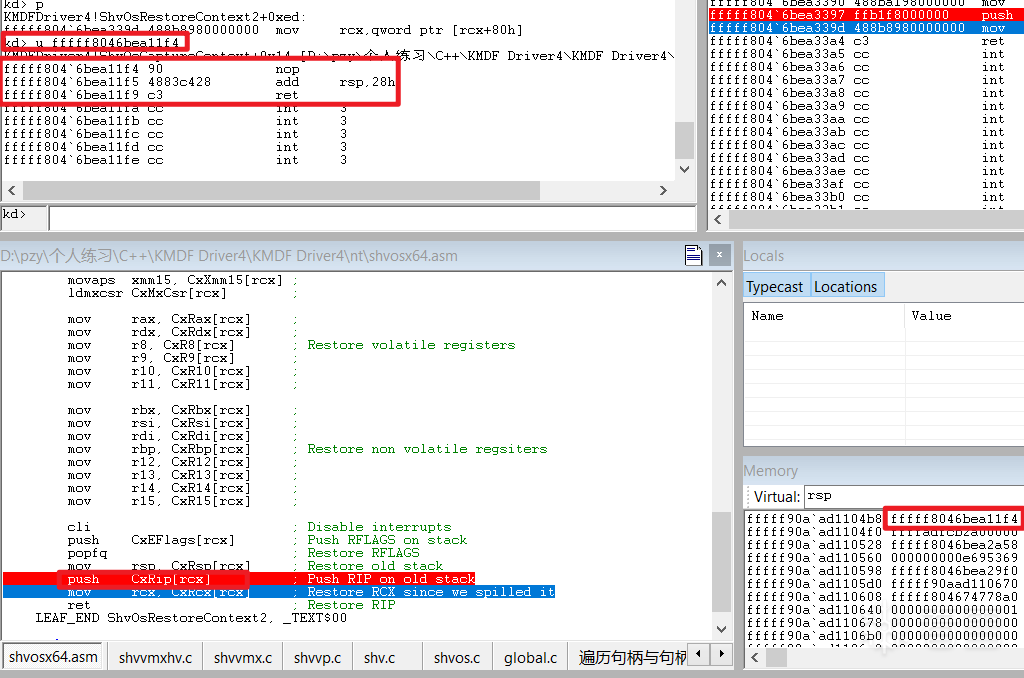

Enter ShvOsRestoreContext2

Modify the return address

Return from ShvOsCaptureContext, then back into ShvVpInitialize

Return further into ShvVpLoadCallback

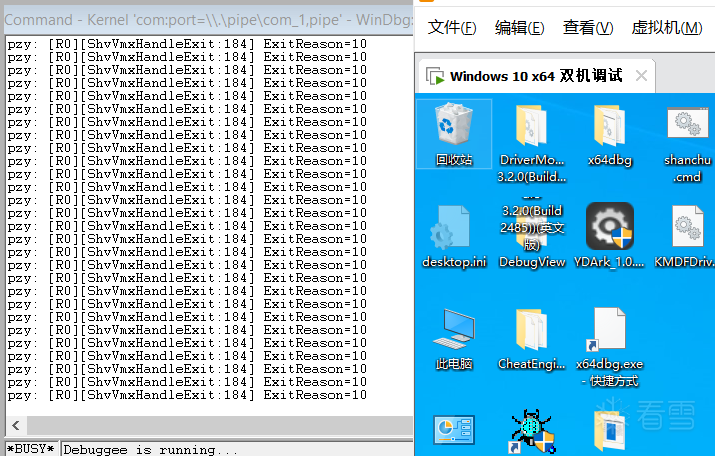

Right after that, the system is taken over

A large number of exits show up, all with reason 10, which is the cpuid intercept

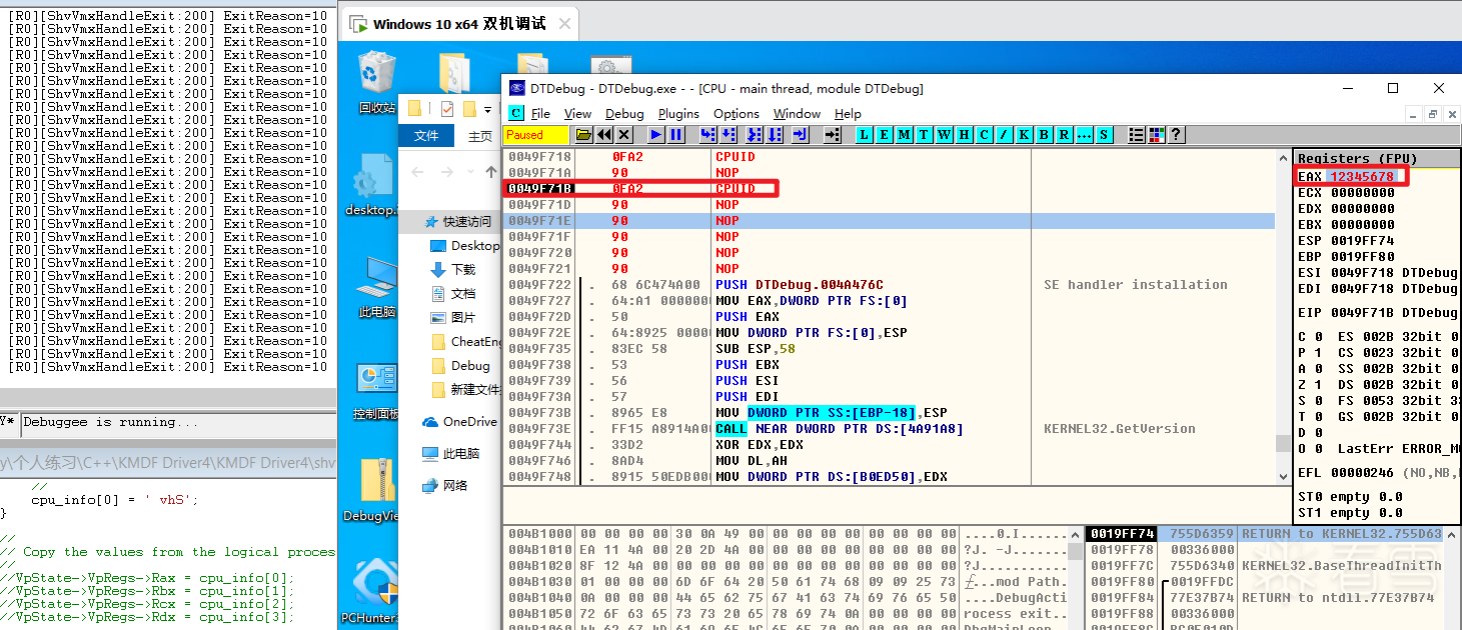

Open a debugger, insert a cpuid instruction, and set rax to 0x12345678 to test

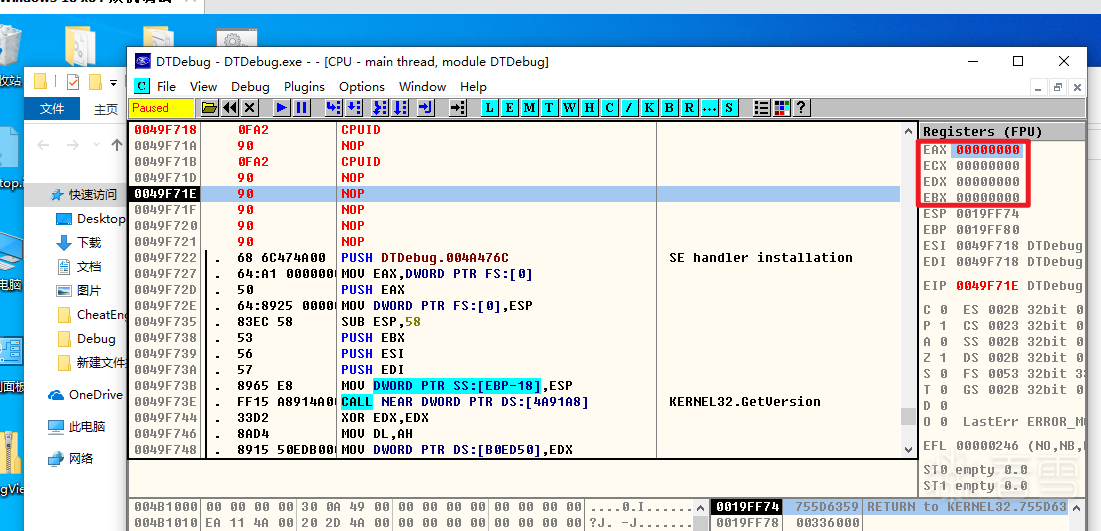

After executing it, the breakpoint is hit as expected

The result shows that rax, rbx, rcx and rdx have been zeroed

The test succeeded

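Summary

I have been busy recently, so this VT test was done in a bit of a hurry. If you spot mistakes or omissions, please leave a comment. Discussion is welcome.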
